The live demo repo for this series is 67ailab/harness-engineering, and for this post I did add a real repo capability before publishing. The repo now includes a workflow export layer in src/harness_engineering/workflow.py, plus a workflow CLI command in src/harness_engineering/cli.py that renders the current harness orchestration as structured JSON or Mermaid. That change shipped in commit a007c08.

That may sound like a documentation flourish. It is not. The point of an orchestration post is not to wave vaguely at boxes and arrows. It is to make the runtime’s control structure explicit enough that you can inspect it, reason about it, and argue about whether it is the right one.

This is the practical claim of the article: orchestration pattern choice determines the failure modes, approval surfaces, trace shape, and operational clarity of an agent system more than most teams realize. If you pick the wrong pattern, the model may still look clever in a demo while the system becomes hard to debug, hard to govern, and weirdly expensive.

The current repo is still intentionally small. That is useful here. Small code makes orchestration visible.

What changed in the repo since the previous post

The new file is src/harness_engineering/workflow.py. It exposes two concrete functions:

build_workflow_definition()workflow_to_mermaid()

Those functions export the current runner’s orchestration as data rather than prose. The exported graph includes:

- nodes

- transitions

- approval gates

- risky steps

- terminal states

- the declared current implementation style:

linear_state_machine_with_approval_gate

The command surface changed too. src/harness_engineering/cli.py now adds:

cmd_workflow()- a

workflowsubcommand with--format json|mermaid

And the repo now tests that capability in tests/test_harness.py, including checks that:

waiting_approvalis marked as an approval gatefinalize_reportis marked risky- terminal states include

doneandfailed - Mermaid output contains the approval transition from

waiting_approvaltofinalize_report

That is a small feature, but it earns its keep. It lets the post talk about orchestration using the real shape of the current harness rather than a made-up architecture sketch.

The current demo is a loop, not a graph runtime

Start with what the repo actually is.

The main orchestration logic still lives in HarnessRunner.run_until_pause_or_complete() in src/harness_engineering/runner.py. That method advances the run through a hand-written sequence:

search_mockextract_factsdraft_reportfinalize_reportdone

It also owns the failure branches and the approval stop.

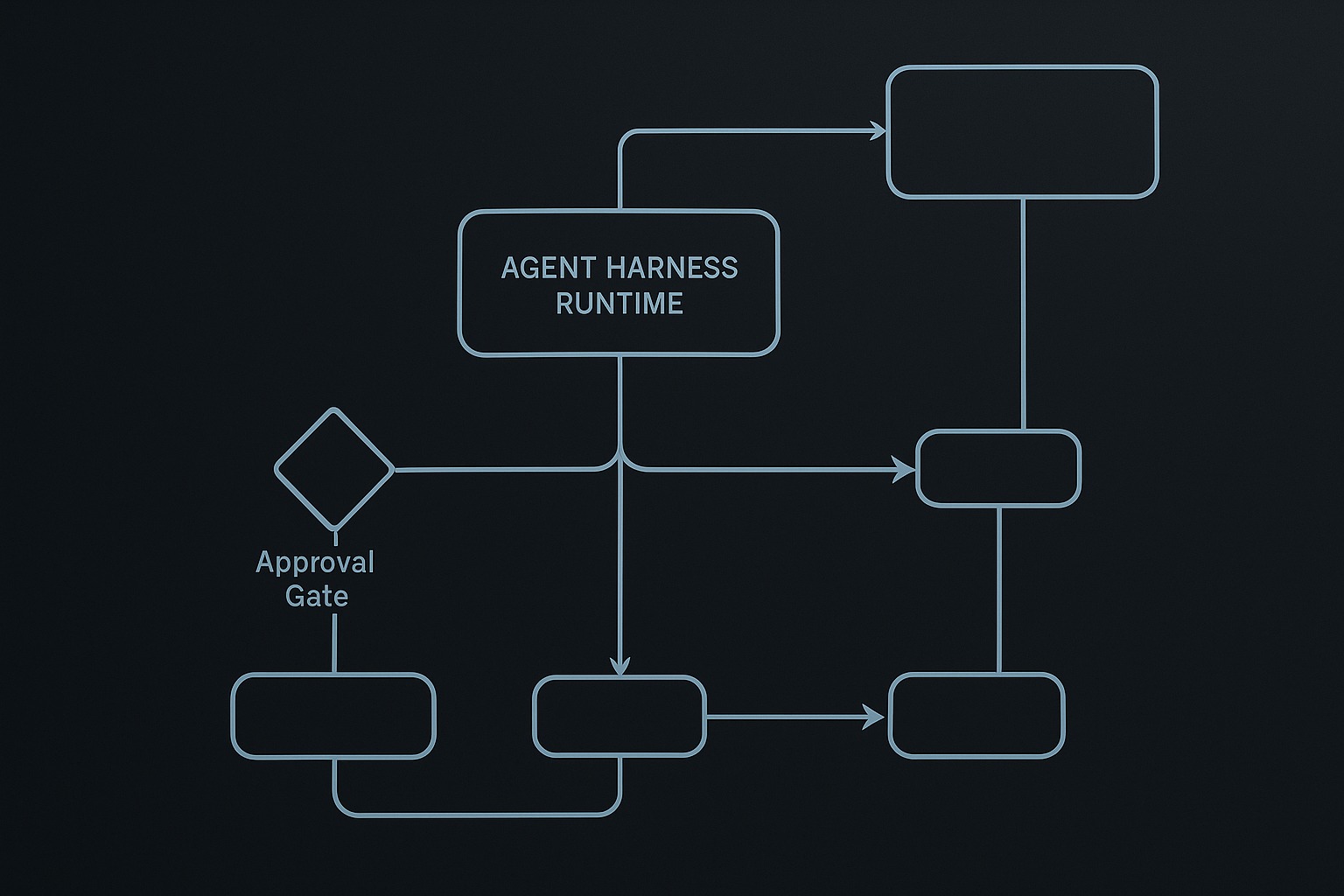

That means the current harness is best described as a linear state machine with an approval gate, not as a general-purpose graph engine. The export in build_workflow_definition() makes that explicit by naming the current implementation exactly that.

This matters because teams often overstate what they have. A lot of “graph-based” agent systems are just loops with a few conditional branches. That is not an insult. It is often the right design. But you should say what it is.

In this repo, the orchestration truth is easy to trace in code:

HarnessRunner.create_run()creates a persistedRunStateHarnessRunner._execute()wraps tool calls with trace emission and retry behaviorHarnessRunner.run_until_pause_or_complete()drives progressionHarnessRunner.approve()andHarnessRunner.resume()mediate the human checkpoint

The new workflow export simply turns that already-existing structure into something inspectable.

Why orchestration pattern choice matters

If you strip away branding and framework syntax, most production agent systems lean on a handful of orchestration patterns:

- loop / state machine

- graph / workflow DAG or state graph

- manager-worker orchestration

- handoffs between specialists

These are not just software architecture labels. They change:

- who owns the next decision

- where state is persisted

- where approvals attach

- what traces look like

- how resumability works

- how expensive misfires become

Anthropic’s practical guidance on effective agents makes a useful distinction here: workflows are predefined code paths, while agents are systems where the model dynamically directs its own processes and tool usage. That distinction is helpful as long as you do not turn it into theology. In real systems, most useful runtimes combine both. You keep some parts explicit and let the model steer inside bounded areas.

The harness-engineering repo is currently on the workflow-heavy side of that line. That is a feature, not a failure. It lets us inspect the tradeoff clearly.

Pattern 1: the loop or explicit state machine

This is the current repo’s home territory.

A loop-based harness says: there is one runtime in charge, and it advances through a small set of named states. The model can help produce artifacts, plans, or reviews, but the application still owns the control flow.

In src/harness_engineering/runner.py, run_until_pause_or_complete() is doing exactly that. It decides what happens when:

search_mockfails after retryextract_factssucceeds- draft review passes

- approval has not yet been granted

- final artifact writing completes

The key advantage of this pattern is clarity.

You can answer questions like:

- What step is next?

- What condition causes failure?

- What exact state means “waiting for approval”?

- What side effect is risky?

And you can answer them without reading model output.

The current RunState model in src/harness_engineering/models.py supports this nicely. Fields like current_step, status, requires_approval, approved, and pending_action are explicit orchestration state, not accidental byproducts.

That combination is why the current demo is easy to reason about operationally.

Where loops shine

Loops or state machines are usually the best fit when:

- the workflow stages are known in advance

- there are only a few branching points

- human approval needs to be attached to named transitions

- resumability matters more than flexibility theater

- you want obvious traces and small code

That is why a loop is a good starter pattern for harness engineering. It is boring in the best way.

Where loops start to hurt

They get awkward when:

- branches multiply

- several steps can run independently

- specialist subtasks need different prompts or policies

- the number of sub-operations is dynamic per request

- the runtime needs richer concurrency or fan-out

That is the point where teams start reaching for graph runtimes or manager-worker schemes.

Pattern 2: graphs and explicit workflow topology

A graph says: stop pretending the workflow is just “some code in a loop.” Make nodes and edges first-class.

That does not automatically mean you need a graph runtime. The repo’s new workflow.py is a good example of the intermediate step: graph visibility without graph execution.

build_workflow_definition() returns a structure with nodes like:

initsearch_mockextract_factsdraft_reportwaiting_approvalfinalize_reportdonefailed

And transitions like:

draft_report -> waiting_approvalonreview_passedwaiting_approval -> finalize_reportonapproval_grantedfinalize_report -> failedontool_error

That is already useful engineering. Once the flow is data, you can:

- inspect it from the CLI with

PYTHONPATH=src python3 -m harness_engineering.cli workflow --pretty - render it as Mermaid with

PYTHONPATH=src python3 -m harness_engineering.cli workflow --format mermaid - compare the declared graph to the actual runner behavior

- build future docs and tests against the orchestration structure

This is also why graph tools like LangGraph resonate with engineers. The attraction is not the word “graph.” It is that graph-shaped workflows make branching, conditional routing, and parallel structure legible.

But graph form is not free.

What graphs improve

Graphs help when:

- the workflow has multiple meaningful branches

- you want explicit conditional edges

- different nodes own different state updates

- visualization itself helps debugging or governance

- fan-out/fan-in patterns are central

LangGraph’s docs show this clearly in prompt chaining, conditional gates, and parallelization examples. The graph is not just pretty; it makes dependencies and join points visible.

What graphs do not magically fix

A graph runtime does not solve:

- approval policy

- deterministic replay

- retry design

- schema discipline

- model brittleness

- trace interpretation

You can absolutely have a graph-shaped mess.

That is why I like the repo’s current incremental move. It exports the graph view of the harness before pretending the harness has become a universal graph engine.

Pattern 3: manager-worker orchestration

This pattern says: one controller stays in charge and delegates bounded subtasks to specialists.

OpenAI’s current orchestration guidance frames this as “agents as tools” or a manager retaining ownership while calling specialists as bounded capabilities. Anthropic describes a related orchestrator-workers pattern where a central model dynamically breaks down work, delegates it, and synthesizes results.

This pattern is often the sweet spot for practical systems because it preserves one outer control surface.

You keep:

- one approval policy root

- one trace owner

- one final response owner

- one stable entry and exit path

but you gain specialist decomposition.

The harness-engineering repo is not yet a real manager-worker runtime, but it already hints at the shape. The split in src/harness_engineering/reviewer.py between create_plan_from_env() and review_from_env() shows specialized roles, even though the main orchestration still lives in HarnessRunner.

That is a useful distinction. Role separation is not the same thing as multi-agent orchestration. Right now the repo has specialized model-assisted functions inside a single controlling harness, not a fleet of peer agents negotiating control.

That is probably the right design at this stage.

Why manager-worker is often underrated

It works well when:

- you need specialization but not identity drama

- the final answer should still be synthesized centrally

- tool permissions differ by subtask

- you want clear nested traces instead of conversational baton-passing

Manager-worker is usually easier to audit than handoffs because somebody clearly remains responsible.

Pattern 4: handoffs

Handoffs are different. Control moves from one specialist to another.

OpenAI’s orchestration docs put this cleanly: handoffs fit when a specialist should own the next branch of the conversation rather than just help behind the scenes. That is a real pattern, but it is a more dangerous one than many demos admit.

Once ownership moves, several questions get harder:

- which agent owns approvals now?

- what history does the receiving agent see?

- does policy travel with the handoff or reset?

- who produces the final user-facing answer?

- how do you explain the boundary in the trace?

That is why I do not think handoffs should be the default pattern for most engineering harnesses. They are useful when specialization truly implies ownership transfer. They are not useful when teams just want to sound more agentic.

The current repo wisely does not do handoffs. It would complicate the demo faster than it would improve it.

The approval gate is the best orchestration lesson in the repo

The most instructive part of the demo is not the search step or the markdown drafting. It is the approval stop between review and final file write.

Here is the sequence that matters in run_until_pause_or_complete():

draft_reportrunsreview_from_env()evaluates the draft- if review passes, the runner does not write to disk

- instead it sets:

current_step = "finalize_report"requires_approval = Truepending_action = "finalize_report"status = "waiting_approval"

- execution stops until

approve()andresume()are called

That is orchestration doing real work.

A weaker system would treat approval as a chat convention. This repo treats it as a state transition.

The new workflow export makes that visible by modeling waiting_approval as its own node and by labeling the edge to finalize_report with approval_granted.

That one design choice is a good reminder that orchestration is not just about task decomposition. It is also about control boundaries.

Traces change shape depending on orchestration

Another reason orchestration pattern choice matters: it determines whether traces will be legible.

In this repo, traces remain readable because there is one controlling runner and a modest number of state transitions. HarnessRunner._execute() emits tool_start, tool_ok, and tool_error, while the outer loop records events like draft_reviewed, approval_required, approval_granted, and run_completed.

That event vocabulary works because the orchestration structure is simple.

Now imagine the same task implemented as:

- free-form handoffs between specialists

- recursive tool loops

- opportunistic retries spread across several layers

The trace gets much harder to interpret unless the orchestration model is extremely disciplined.

This is one reason I think teams should choose the simplest orchestration pattern that matches the problem. Complexity compounds in observability long before it becomes obvious in demos.

What the demo proves

This repo does not prove that one orchestration pattern is universally best. It proves a narrower and more useful set of claims.

1. A small explicit loop can be the right orchestration pattern

The current harness shows that a hand-written state machine in HarnessRunner.run_until_pause_or_complete() is enough to deliver retries, approval gating, tracing, pause/resume, and final artifact writing.

2. Making orchestration inspectable is already a meaningful capability

build_workflow_definition(), workflow_to_mermaid(), and cmd_workflow() turn the harness topology into something reviewable. That alone improves docs, debugging, and future extension planning.

3. Approval is an orchestration primitive, not a UI courtesy

The waiting_approval node in workflow.py mirrors a real runtime boundary in runner.py. That is exactly the sort of thing many “agent” demos hand-wave away.

4. Role separation does not require full multi-agent control transfer

create_plan_from_env() and review_from_env() show that you can use specialized model roles without turning the whole harness into a peer-agent handoff system.

What it still does not solve

This is where honesty matters.

1. The repo still executes one concrete orchestration style

The new graph export does not mean the runtime can execute arbitrary graphs. It is a view of the current implementation, not a general graph scheduler.

2. There is no true parallelism or dynamic worker fan-out yet

The repo does not yet implement parallel branches, worker pools, or orchestrator-generated subtask expansion. So this post can discuss those patterns, but it cannot claim the demo already supports them.

3. Handoffs are discussed, not implemented

There is no peer-agent takeover model in the code today. That is probably correct for now, but it means the article must stay clear about the boundary between pattern analysis and shipped capability.

4. Reviewer robustness is still a known weak point

review_markdown() in src/harness_engineering/reviewer.py still expects clean JSON from the model-backed reviewer path. As earlier runs already showed, local models can return fenced JSON or extra prose. That brittleness is orthogonal to orchestration, but it affects real execution.

5. Graph visibility is not the same as durable replay semantics

Temporal’s workflow documentation is useful here because it shows what durable execution really entails: recorded event history, deterministic replay, and separation between workflow code and side-effecting activities. The demo repo persists state and traces, but it is not yet a Temporal-style durable execution engine.

The practical selection rule

If you are designing an agent harness, the orchestration question is usually simpler than people make it sound.

Use a loop / state machine when the path is known, approval points are explicit, and operational clarity matters most.

Use a graph when branching structure, conditional edges, or fan-in/fan-out are central enough that you need topology to be first-class.

Use manager-worker when one runtime should still own the answer and policy, but specialist subtasks materially improve capability or isolation.

Use handoffs only when a specialist genuinely needs to take over ownership of the next branch.

And in all four cases, insist on the same baseline questions:

- Where does state live?

- Where do risky actions stop?

- Who owns the next decision?

- What does the trace look like after failure?

- Can the run resume coherently?

If you cannot answer those questions, the orchestration pattern is not really chosen yet. It is just happening to you.

Why this repo change was the right one before publishing

I did not want to publish an orchestration article that only described patterns in the abstract. The repo needed one concrete new capability that made orchestration easier to inspect.

The workflow export does that without pretending the demo is bigger than it is. It keeps the real execution path in runner.py, but it gives readers a stable artifact they can inspect from the command line and compare against the code.

That is the right kind of evolution for this series:

- small

- real

- test-covered

- architecturally meaningful

- honest about scope

The next orchestration step, if the repo needs one later, should probably be a true alternate execution mode or a narrowly scoped manager-worker path. But that should come only when the code genuinely needs it.

Right now the better lesson is simpler: before you add more agent behavior, make the control structure visible.

References

- 67 AI Lab,

harness-engineeringrepository: https://github.com/67ailab/harness-engineering - Anthropic, “Building effective agents”: https://www.anthropic.com/engineering/building-effective-agents

- OpenAI API docs, “Orchestration and handoffs”: https://developers.openai.com/api/docs/guides/agents/orchestration

- OpenAI Agents SDK docs, “Agents”: https://openai.github.io/openai-agents-python/agents/

- LangGraph docs, “Workflows and agents”: https://docs.langchain.com/oss/python/langgraph/workflows-agents

- Temporal docs, “Temporal Workflow”: https://docs.temporal.io/workflows