Radiotherapy contouring is entering a new phase. For years, progress was driven mainly by image segmentation: better backbones, larger datasets, and stronger 3D architectures improved the automatic outlining of visible anatomy. That approach remains highly effective for organs-at-risk (OARs), where the task is largely to identify and delineate structures that can be seen directly on imaging.

Target contouring is different. Gross tumor volume (GTV), clinical target volume (CTV), nodal target volumes, and postoperative beds are not defined by pixels alone. They are shaped by disease extent, stage, pathology, surgical status, laterality, expected patterns of spread, institutional practice, and protocol logic. In real clinical workflows, radiation oncologists do not contour from images alone; they contour from images interpreted in context.

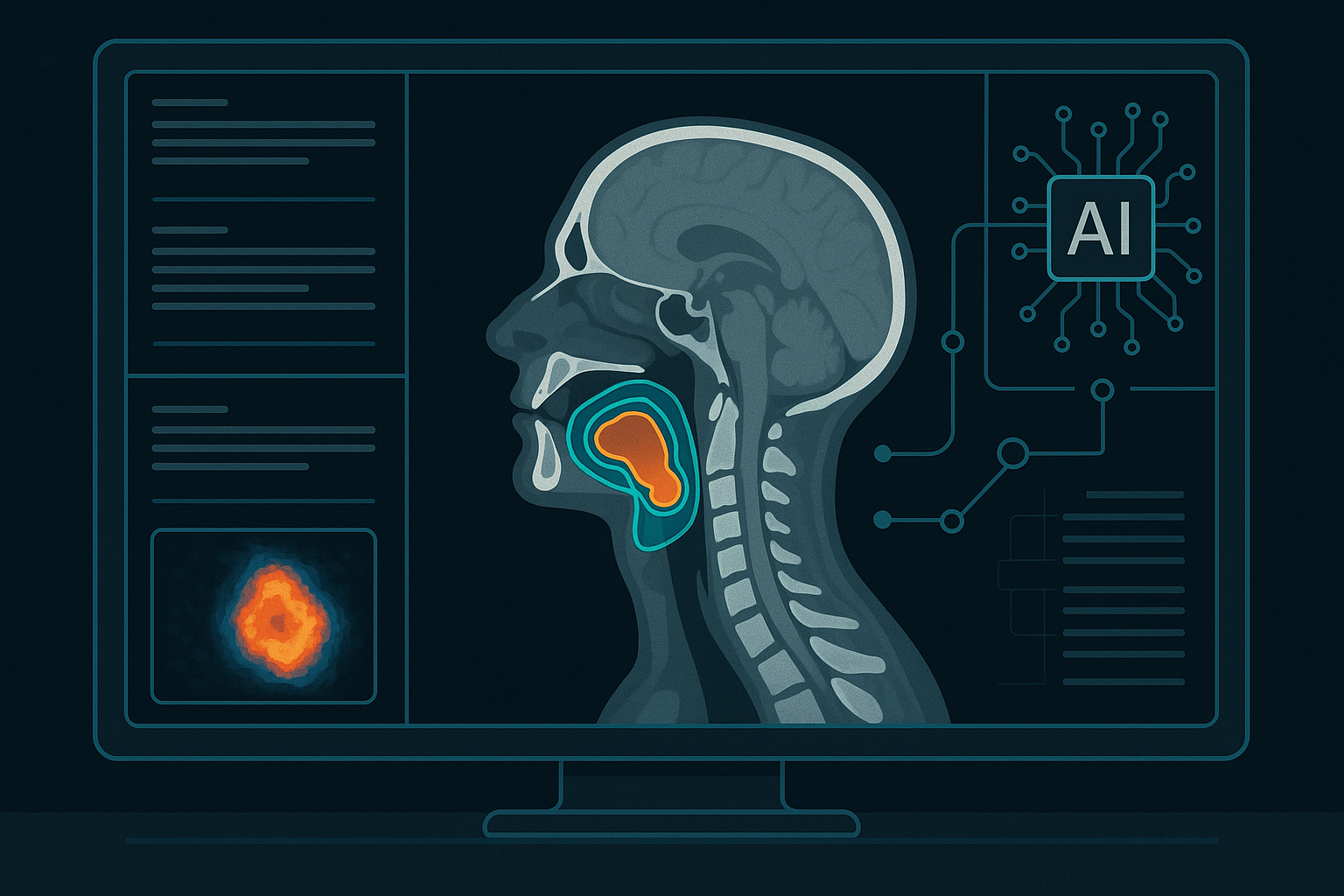

That is why recent work has started to move beyond vision-only segmentation toward multimodal, LLM-augmented, and visual-language contouring systems. The important point is not that a generic chatbot can suddenly replace a task-specific contouring model. Rather, the field is converging on architectures that combine volumetric imaging with text-derived clinical context, then use fusion mechanisms to generate more clinically grounded target volumes.

This article reviews the current state of the art in LLM- and VLM-related contouring, outlines the major gaps and challenges, and discusses where the next opportunities may emerge.

1. State of the art in LLM-based contouring

1.1 Why contouring is not a pure image problem

The distinction between OAR contouring and target contouring is foundational.

For OARs, the task is usually anatomically anchored: the question is where the organ begins and ends on the scan. Although there are still challenges around small structures, poor contrast, and interobserver variability, the problem is fundamentally a visual segmentation problem.

For targets, the question is broader. A clinically appropriate target may depend on:

- visible gross disease on planning CT

- additional evidence from MRI or PET

- tumor stage and nodal status

- pathology and histology

- operative findings and postoperative anatomy

- protocol-driven elective coverage rules

- physician intent and local institutional standards

As a result, target delineation benefits from models that can integrate both imaging and non-imaging context. This is the core motivation behind multimodal and LLM-augmented contouring research.

1.2 Emerging design pattern: 3D vision plus clinical language context

Across recent papers, a common pattern is starting to emerge:

- a 3D image encoder over planning CT, often with optional PET or MRI inputs

- a text encoder or LLM-derived representation built from reports, staging details, pathology, or structured clinical notes

- a fusion mechanism, often based on cross-attention or visual-language interaction

- a contouring decoder specialized for GTV, CTV, nodal volumes, or related target structures

This pattern is meaningfully different from simply prompting a general-purpose multimodal chatbot with a scan. The leading research direction still looks task-specific and architecture-aware. The LLM or VLM component is typically used to encode or condition on clinical context rather than to produce the contour by itself.
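The fusion step in this pattern can be illustrated with a minimal cross-attention sketch in plain Python. It is not the implementation of any of the papers discussed here: the token vectors are toy values, the function names are hypothetical, and the learned query, key, and value projections that a real model would use are replaced by identity mappings to keep the example short.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def cross_attention(image_tokens, text_tokens):
    """Each image token (query) attends over the text tokens (keys and values).

    Assumption: identity projections instead of learned weight matrices.
    Returns the fused tokens (image token plus attended text context)
    and the attention weights for inspection.
    """
    dim = len(image_tokens[0])
    scale = 1.0 / math.sqrt(dim)
    fused, all_weights = [], []
    for query in image_tokens:
        weights = softmax([dot(query, key) * scale for key in text_tokens])
        context = [
            sum(w * value[d] for w, value in zip(weights, text_tokens))
            for d in range(dim)
        ]
        # Residual connection: keep the image feature, add text-derived context.
        fused.append([q + c for q, c in zip(query, context)])
        all_weights.append(weights)
    return fused, all_weights

# Toy features: three image tokens and two clinical-text tokens, dimension 2.
image_tokens = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]
text_tokens = [[2.0, 0.0], [0.0, 2.0]]
fused, attn = cross_attention(image_tokens, text_tokens)
```

In this toy setup the first image token attends mostly to the first text token, and the third image token splits its attention evenly. A contouring decoder would then operate on the fused tokens rather than on image features alone.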

1.3 Medformer: multimodal learning for treatment target delineation

One of the clearest examples is the paper “Auto-delineation of Treatment Target Volume for Radiation Therapy Using Large Language Model-Aided Multimodal Learning,” which introduced the Medformer framework for radiation therapy target volume delineation.

What stands out in this line of work is the explicit use of multimodal learning to improve target contouring rather than generic organ segmentation. The paper reports gains from integrating image features with clinically relevant context derived from text.

According to the published abstract, reported improvements include:

- prostate GTV DSC of 0.81 ± 0.10 versus 0.72 ± 0.10 for the baseline

- prostate GTV IoU of 0.73 ± 0.12 versus 0.65 ± 0.09

- prostate GTV HD95 of 9.86 mm versus 19.13 mm

- oropharyngeal GTV DSC of 0.77 ± 0.16 versus 0.72 ± 0.16

- CTV DSC around 0.91 across the evaluated settings

These results are notable because they support the broader thesis that target contouring improves when the model has access to clinically meaningful context in addition to imaging.
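For readers less familiar with the overlap metrics quoted above, the following is a minimal sketch of how the Dice similarity coefficient (DSC) and intersection over union (IoU) are computed for one pair of masks. Masks are represented as sets of voxel coordinates for brevity, and the example masks are toy data, not taken from any paper.

```python
def dice_and_iou(pred_voxels, truth_voxels):
    """Return (DSC, IoU) for two binary masks given as sets of voxel coordinates."""
    pred, truth = set(pred_voxels), set(truth_voxels)
    overlap = len(pred & truth)
    union = len(pred | truth)
    if union == 0:
        # Convention chosen for this sketch: two empty masks agree perfectly.
        return 1.0, 1.0
    dice = 2.0 * overlap / (len(pred) + len(truth))
    iou = overlap / union
    return dice, iou

# Toy example: a 4x4 reference region and a prediction shifted by one column.
truth = {(0, y, x) for y in range(4) for x in range(4)}
pred = {(0, y, x) for y in range(4) for x in range(1, 5)}
dice, iou = dice_and_iou(pred, truth)
```

Here 12 of the 16 voxels overlap, giving a DSC of 0.75 and an IoU of 0.6. For a single case the two metrics are linked by IoU = DSC / (2 - DSC), so they rank individual contours identically even though cohort averages of the two can diverge.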

1.4 Radformer: visual-language target volume auto-delineation

A second important example is Radformer, described as a visual language model-based RT target volume auto-delineation network.

Radformer combines a hierarchical vision transformer with LLM-derived text features from clinical data and introduces a visual-language attention module. In the reported evaluation on a large head-and-neck radiotherapy cohort, the model showed substantial improvements over a baseline segmentation approach.

The reported test performance includes:

- GTV DSC of 0.76 ± 0.09 versus 0.66 ± 0.09

- IoU of 0.69 ± 0.08 versus 0.59 ± 0.07

- HD95 of 7.82 ± 6.87 mm versus 14.28 ± 6.85 mm
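HD95, the 95th-percentile Hausdorff distance, measures boundary disagreement in millimetres and is less sensitive to isolated outliers than the maximum Hausdorff distance. The sketch below shows one common formulation, assuming the contour surfaces are already available as point lists in millimetre coordinates. Toolkits differ in how they extract surface points and pool the two directions, so this is an illustration rather than the exact procedure used in the cited evaluations.

```python
import math

def percentile(values, q):
    """Linearly interpolated percentile, with q in [0, 100]."""
    ordered = sorted(values)
    pos = (len(ordered) - 1) * q / 100.0
    lo, hi = math.floor(pos), math.ceil(pos)
    frac = pos - lo
    return ordered[lo] * (1.0 - frac) + ordered[hi] * frac

def directed_distances(source, target):
    """Distance from each source point to its nearest target point."""
    return [min(math.dist(p, q) for q in target) for p in source]

def hd95(surface_a, surface_b):
    """Larger of the two directed 95th-percentile surface distances."""
    return max(
        percentile(directed_distances(surface_a, surface_b), 95),
        percentile(directed_distances(surface_b, surface_a), 95),
    )

def hausdorff_max(surface_a, surface_b):
    """Classic Hausdorff distance, shown for comparison."""
    return max(
        max(directed_distances(surface_a, surface_b)),
        max(directed_distances(surface_b, surface_a)),
    )

# Toy 2D surfaces: two parallel lines 2 mm apart, plus one stray point on B.
surface_a = [(float(x), 0.0) for x in range(20)]
surface_b = [(float(x), 2.0) for x in range(20)] + [(10.0, 50.0)]

robust = hd95(surface_a, surface_b)
worst_case = hausdorff_max(surface_a, surface_b)
```

In this toy case the single stray point drives the maximum Hausdorff distance to 50 mm while HD95 stays at 2 mm, which is why HD95 is usually preferred for reporting contour boundary error.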

The broader significance of Radformer is architectural. It treats contouring as a visual-language problem, not merely a vision problem with a small amount of tabular metadata attached.

1.5 LLMSeg: multimodal contouring framed explicitly as a clinical-context problem

The Nature Communications paper on LLMSeg is perhaps the most direct articulation of where the field is heading. It argues that while OAR contouring often depends mainly on what is visible, target volume contouring depends on disease- and patient-specific context such as stage, histology, metastatic extent, age, performance status, and other variables.

LLMSeg therefore combines a 3D segmentation backbone with LLM-derived clinical text conditioning and image-text interaction modules. This is not an incidental use of language. It is a recognition that the correct contour often depends on information outside the image itself.

Conceptually, this is a strong step forward for radiation oncology AI. It moves contouring closer to the real cognitive process used by clinicians, where images are interpreted together with reports, disease characteristics, and treatment intent.

1.6 What “state of the art” means in practice

The field is still early, and the phrase “state of the art” should be used carefully. There is not yet a single universally dominant model family for target contouring in the way that some benchmark-driven areas of machine learning eventually develop.

A more accurate reading of the literature is this:

- the research frontier is shifting toward multimodal, context-aware target contouring

- the strongest systems are still specialized medical models rather than generic foundation-model chat interfaces

- language is increasingly being used as a conditioning and representation layer for clinically important context

- clinician review remains essential, and the best near-term systems are likely to be assistive rather than autonomous

In other words, the field is not replacing segmentation with language. It is augmenting segmentation with language-derived context where contouring logic demands it.

2. Gaps and challenges

Despite the promise of multimodal contouring, several hard problems remain open.

2.1 Ground truth is inherently noisy

Radiotherapy targets are famous for interobserver variability. Even among experienced clinicians, contouring decisions may differ because of judgment, school of practice, protocol interpretation, or local habit. That means the training label itself is often uncertain.

This has two consequences. First, model performance may reflect agreement with one institution’s style rather than universal correctness. Second, gains in metrics such as Dice may overstate clinical value if the underlying label standard is unstable.
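One way to put label noise in perspective is to compare model-versus-observer agreement with observer-versus-observer agreement on the same case. The sketch below assumes several clinicians have contoured the same target; the observer names and voxel sets are hypothetical toy data.

```python
from itertools import combinations
from statistics import mean

def dice(a, b):
    """Dice coefficient between two masks given as sets of voxel indices."""
    if not a and not b:
        return 1.0
    return 2.0 * len(a & b) / (len(a) + len(b))

def pairwise_observer_dice(contours):
    """Dice for every pair of observers; contours maps observer name to voxel set."""
    return {
        (first, second): dice(contours[first], contours[second])
        for first, second in combinations(sorted(contours), 2)
    }

# Hypothetical contours from three observers, flattened to 1D voxel indices.
observers = {
    "obs_a": set(range(0, 10)),
    "obs_b": set(range(2, 12)),
    "obs_c": set(range(0, 8)),
}
model_contour = set(range(1, 10))

observer_scores = pairwise_observer_dice(observers)
inter_observer_mean = mean(observer_scores.values())
model_vs_observers = mean(dice(model_contour, c) for c in observers.values())
```

If the model's mean agreement with the observers is already within the spread of inter-observer agreement, further Dice gains against a single annotator's labels may say more about that annotator's style than about clinical correctness.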

2.2 Generalization across centers is difficult

External validation remains a major barrier. Differences in scanner protocols, patient populations, disease mix, contouring conventions, treatment planning workflows, and annotation standards can degrade performance when a model is moved from one site to another.

For contouring systems intended for real-world deployment, robustness across institutions matters as much as headline performance on an internal test set.
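A simple way to surface this in an evaluation report is to break metrics down by site instead of pooling all cases. The following sketch assumes each test case carries a site label; the site names and scores are invented for illustration and are not results from any study.

```python
from collections import defaultdict
from statistics import mean

def dice_by_site(cases):
    """Mean Dice per site; cases is an iterable of (site, dice_score) pairs."""
    grouped = defaultdict(list)
    for site, score in cases:
        grouped[site].append(score)
    return {site: mean(scores) for site, scores in grouped.items()}

def worst_external_gap(per_site, internal_site):
    """Largest drop in mean Dice from the internal site to any other site."""
    internal = per_site[internal_site]
    return max(internal - score for site, score in per_site.items() if site != internal_site)

# Illustrative placeholder scores only.
cases = [
    ("internal", 0.82), ("internal", 0.78),
    ("external_a", 0.70), ("external_a", 0.66),
    ("external_b", 0.74),
]
per_site = dice_by_site(cases)
gap = worst_external_gap(per_site, "internal")
```

Reporting the worst per-site gap alongside the pooled mean makes it harder for a strong internal test set to mask weak external performance.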

2.3 Multimodal fusion is still immature

Combining imaging, reports, staging information, pathology, and protocol text is conceptually appealing, but technically difficult. Clinical text can be incomplete, inconsistent, or noisy. Structured variables may be missing. Imaging modalities may not align cleanly. Fusion mechanisms may overemphasize certain signals or fail to model uncertainty properly.

The question is not only whether more modalities help, but how to fuse them in a way that is stable, interpretable, and clinically safe.
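One small but concrete piece of this problem is making missing clinical inputs explicit instead of silently dropping them before text encoding. The sketch below assumes a hypothetical set of structured fields and a hypothetical `[MISSING]` marker; a real system would define both according to its own data model.

```python
# Hypothetical field list, chosen for illustration only.
REQUIRED_FIELDS = ("stage", "histology", "laterality", "surgical_status")

def build_context(record):
    """Serialize clinical fields into one text string plus a presence mask.

    Missing or blank fields are written as an explicit marker so that the
    downstream encoder, and any audit log, can tell absent from negative.
    """
    parts, present = [], []
    for field in REQUIRED_FIELDS:
        value = record.get(field)
        has_value = value is not None and str(value).strip() != ""
        present.append(has_value)
        shown = str(value).strip() if has_value else "[MISSING]"
        parts.append(f"{field}: {shown}")
    return "; ".join(parts), present

record = {"stage": "T2N1", "histology": " ", "laterality": "left"}
context_text, presence_mask = build_context(record)
```

The presence mask can be passed to the fusion module, for example to suppress attention to absent fields or to trigger an image-only fallback when too much context is missing.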

2.4 Language hallucination introduces a new risk surface

Language models are powerful contextual encoders, but they also introduce failure modes. If a system infers disease extent too aggressively from text, or produces fluent rationale unsupported by imaging and actual clinical evidence, it can become persuasive in the wrong direction.

In radiotherapy, that is not a cosmetic problem. A plausible-sounding but incorrect contour rationale could make errors harder to detect, not easier.

2.5 3D anatomical reasoning remains challenging

Many language-centric multimodal systems are strongest on text and 2D reasoning. Radiation oncology contouring, however, is a 3D spatial problem that demands anatomical continuity, boundary consistency, and volumetric reasoning.

Any serious production system will need a strong volumetric foundation. The language component may improve context handling, but it does not remove the need for rigorous 3D modeling.

2.6 Better contour metrics do not automatically mean better patient care

A higher Dice score is useful, but it is not the final endpoint. The clinically relevant questions are broader:

- does the contour reduce physician editing time?

- does it improve peer-review acceptance?

- does it lead to better planning consistency?

- does it improve dose distributions or downstream workflow quality?

- does it maintain safety across edge cases and unusual anatomies?

The field still needs stronger evidence connecting multimodal contouring gains to practical and clinical outcomes.

2.7 Regulation, auditability, and trust remain unresolved

Deploying multimodal contouring in clinical settings will require more than a strong model. It will require auditable behavior, clear provenance of inputs, robust logging, fail-safe human review, and a defensible validation framework.

The more a system presents itself as “reasoning” over reports and protocol text, the more important transparency becomes. Clinicians need to know what information was used, what assumptions were made, and where uncertainty remains.
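As a rough illustration of input provenance, the sketch below builds an audit record that fingerprints the exact image and text inputs behind a contour proposal. The record layout, field names, and version string are assumptions made for this example, not a regulatory or vendor standard.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from typing import Optional, Tuple

@dataclass(frozen=True)
class ContourAuditRecord:
    """Hypothetical provenance record for one contour proposal."""
    model_version: str
    image_sha256: str
    context_sha256: str
    fields_used: Tuple[str, ...]
    approved_by: Optional[str] = None  # filled in only after human review

def sha256_hex(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()

def make_record(model_version, image_bytes, context_text, fields_used):
    """Fingerprint the inputs so a reviewer can later verify what the model saw."""
    return ContourAuditRecord(
        model_version=model_version,
        image_sha256=sha256_hex(image_bytes),
        context_sha256=sha256_hex(context_text.encode("utf-8")),
        fields_used=tuple(sorted(fields_used)),
    )

# Placeholder inputs; a real system would hash the actual image series and text.
record = make_record(
    "demo-0.1",
    b"placeholder-ct-bytes",
    "stage: T2N1; laterality: left",
    ["stage", "laterality"],
)
log_line = json.dumps(asdict(record), sort_keys=True)
```

Because the record is immutable and built from content hashes, the same inputs always yield the same fingerprint, and any later change to the image or clinical text is detectable.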

3. Vision and opportunities

The most compelling future is not a generic chatbot drawing contours. It is a clinically grounded multimodal contouring assistant that combines strong segmentation with contextual reasoning, explanation, and iterative human review.

3.1 Contouring copilot rather than fully autonomous autopilot

A useful clinical assistant should do more than generate a mask. It should be able to:

- propose an initial target contour

- explain which clinical factors influenced the proposal

- highlight uncertain regions or disagreement areas

- accept iterative refinement instructions

- support review and QA rather than bypass them

This assistive model aligns better with actual radiotherapy workflow. Clinicians do not just want an answer; they want a contour they can inspect, edit, and trust.

3.2 Text-grounded nodal and elective volume generation

One of the highest-value opportunities is nodal CTV generation and other targets whose definition depends strongly on disease context and protocol logic.

These are precisely the cases where text-derived context matters most. Stage, laterality, nodal involvement, postoperative findings, and guideline rules all shape what should be included. A multimodal system that understands those inputs may offer meaningful value beyond conventional segmentation.

3.3 Interactive contour refinement

Another important opportunity is interactive refinement. In practice, clinicians often think in instructions such as:

- expand posteriorly

- exclude the air cavity

- respect the postoperative bed

- do not cross midline

- include the PET-avid node

This kind of iterative workflow is a natural fit for multimodal systems. It turns contouring from a one-shot prediction task into a collaborative editing loop.
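Once an instruction has been parsed into a structured command, several of the edits listed above reduce to simple mask operations. The sketch below works on a 2D mask stored as a set of (y, x) voxels and assumes that posterior corresponds to increasing y and that the midline is a fixed column index. The operation names are hypothetical, the language-to-command parsing step is not shown, and grid bounds are not enforced.

```python
def expand_posteriorly(mask, voxels=1):
    """Grow the mask toward larger y (assumed posterior direction)."""
    grown = set(mask)
    for step in range(1, voxels + 1):
        grown |= {(y + step, x) for (y, x) in mask}
    return grown

def exclude_region(mask, region):
    """Remove a region such as an air cavity from the mask."""
    return set(mask) - set(region)

def restrict_to_side(mask, midline_x, keep_low_x=True):
    """Drop voxels on the far side of the midline column."""
    if keep_low_x:
        return {(y, x) for (y, x) in mask if x < midline_x}
    return {(y, x) for (y, x) in mask if x > midline_x}

OPERATIONS = {
    "expand_posteriorly": expand_posteriorly,
    "exclude_region": exclude_region,
    "restrict_to_side": restrict_to_side,
}

def apply_edits(mask, edits):
    """Apply a sequence of (operation_name, keyword_arguments) commands in order."""
    for name, kwargs in edits:
        mask = OPERATIONS[name](mask, **kwargs)
    return mask

initial = {(y, x) for y in range(2, 4) for x in range(1, 5)}
air_cavity = {(3, 2)}
edits = [
    ("expand_posteriorly", {"voxels": 1}),
    ("exclude_region", {"region": air_cavity}),
    ("restrict_to_side", {"midline_x": 3}),
]
refined = apply_edits(initial, edits)
```

Keeping each instruction as an explicit, logged command means the clinician can see exactly which edits were applied and undo them individually.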

3.4 Auto-QA, uncertainty estimation, and review support

Some of the strongest near-term commercial value may come from review support rather than primary generation. Multimodal systems could help:

- detect missing nodal levels

- identify anatomically implausible boundaries

- compare contours against protocol expectations

- flag low-confidence regions for focused review

- generate concise rationale for why a region was included or excluded

This is especially promising because it complements existing planning workflows instead of demanding complete replacement.
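Two of these checks are simple enough to sketch directly: comparing the delivered structure set against a protocol's expected structures, and flagging cases where a large share of voxels has an ambiguous predicted probability. The structure names, the uncertainty band, and the threshold below are placeholders, not clinical guidance.

```python
def missing_structures(expected, contoured):
    """Structures the protocol expects that are absent from the structure set."""
    return sorted(set(expected) - set(contoured))

def low_confidence_fraction(probabilities, band=0.2):
    """Fraction of voxels whose foreground probability lies within band of 0.5."""
    if not probabilities:
        return 0.0
    uncertain = [p for p in probabilities if abs(p - 0.5) <= band]
    return len(uncertain) / len(probabilities)

def review_flags(expected, contoured, probabilities, max_uncertain=0.25):
    """Collect human-readable flags for focused review."""
    flags = [f"missing: {name}" for name in missing_structures(expected, contoured)]
    fraction = low_confidence_fraction(probabilities)
    if fraction > max_uncertain:
        flags.append(f"low confidence in {fraction:.0%} of voxels")
    return flags

# Placeholder structure names and per-voxel probabilities.
expected = ["CTV_primary", "CTVn_level_A", "CTVn_level_B"]
contoured = ["CTV_primary", "CTVn_level_A"]
probabilities = [0.95, 0.9, 0.55, 0.4, 0.05, 0.6, 0.98, 0.02]

flags = review_flags(expected, contoured, probabilities)
```

In this toy case the check reports one missing structure and a low-confidence warning. Flags of this kind direct reviewer attention without changing the contour itself, which keeps the final decision with the clinician.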

3.5 Protocol- and institution-aware adaptation

Contouring standards vary across hospitals, regions, trial groups, and physician teams. A major opportunity is to make systems adaptable to local contouring atlases and textual protocols without rebuilding the entire model stack from scratch.

Language-conditioned systems are attractive here because many clinical rules exist first in written guidance, not in neatly labeled datasets. The combination of image modeling and protocol-aware conditioning could become a practical path toward safer institution-specific deployment.

3.6 Beyond segmentation toward clinically aware multimodal systems

The longer-term opportunity is not simply to improve benchmark scores. It is to build systems that better reflect how clinicians actually reason about target definition.

That means combining:

- volumetric image understanding

- clinical-document understanding

- cross-modal grounding

- uncertainty estimation

- human-in-the-loop refinement

- traceable rationale generation

If this direction matures, the most valuable systems may not be those with the highest isolated segmentation metric, but those that best integrate into contouring workflow while improving consistency, safety, and efficiency.

4. References

- Rajendran P, et al. Auto-delineation of Treatment Target Volume for Radiation Therapy Using Large Language Model-Aided Multimodal Learning. International Journal of Radiation Oncology, Biology, Physics. https://pubmed.ncbi.nlm.nih.gov/39117164/

- Rajendran P, et al. Large Language Model-Augmented Auto-Delineation of Treatment Target Volume in Radiation Therapy (Radformer). Preprint, available via PMC. https://pmc.ncbi.nlm.nih.gov/articles/PMC11261986/

- Oh Y, et al. LLM-driven multimodal target volume contouring in radiation oncology (LLMSeg). Nature Communications. https://www.nature.com/articles/s41467-024-53387-y