The live demo repo for this series is 67ailab/harness-engineering, and I changed the repo before publishing this post. The new commit is b9a60e8, which adds per-step timing metadata, lightweight workload and token estimates, and performance/cost rollups to the harness traces and summaries.

That change lives mainly in:

- src/harness_engineering/models.py

- src/harness_engineering/runner.py

- src/harness_engineering/tracing.py

- src/harness_engineering/store.py

- tests/test_harness.py

- README.md

The core additions are:

- new timing and metrics fields on StepResult in models.py

- wall-clock measurement inside RetryPolicy.call() in runner.py

- step-level workload estimation in HarnessRunner._estimate_step_metrics()

- aggregated performance and cost rollups in build_trace_summary() in tracing.py

- operator-facing rollups in RunStore.build_summary() in store.py

This is the right place for Post 12 to land, because cost and latency problems in agent systems almost never come from one bad prompt. They come from system shape:

- too many round trips

- too many generated tokens

- no distinction between cheap and expensive steps

- no trace that explains where the time actually went

- no measurement surface for workload growth

- no visibility into retries, approvals, or slow reviewer/model calls

That is harness engineering.

What changed in the repo since the previous post

Post 11 was about policy, auth, and safe boundaries. That was necessary, but it did not yet give the harness a decent answer to another practical operator question:

Where is the time going, and what kind of work volume are we actually asking the system to do?

Before this run, the repo already had:

- explicit tool steps in HarnessRunner.run_until_pause_or_complete()

- persisted run state in RunStore

- raw trace events via add_trace()

- summary surfaces via summary.json and trace_summary.json

But the observability was still more structural than performance-oriented. You could tell what happened. You could not tell much about how expensive or how slow it was, except by eyeballing timestamps or reading raw artifacts.

So I added a small performance layer.

The repo now records, per executed step:

- started_at

- finished_at

- duration_ms

- metrics

Those fields live on StepResult in src/harness_engineering/models.py.
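As a rough illustration of what such a record could look like, here is a minimal sketch. Only the four field names started_at, finished_at, duration_ms, and metrics come from the post; the other fields, the types, and the timestamp format are assumptions, and the actual StepResult in models.py may differ.

```python
from dataclasses import dataclass, field
from typing import Any, Optional


@dataclass
class StepResult:
    """Hypothetical shape of a step record; not copied from the repo."""

    tool_name: str                     # assumed field
    ok: bool                           # assumed field
    output: Any = None                 # assumed field
    started_at: Optional[str] = None   # timestamp string (format assumed)
    finished_at: Optional[str] = None
    duration_ms: Optional[int] = None  # wall-clock time for the whole step
    metrics: dict[str, Any] = field(default_factory=dict)


# Illustrative values only, not taken from a real run.
example = StepResult(
    tool_name="search_mock",
    ok=True,
    started_at="2024-01-01T00:00:00+00:00",
    finished_at="2024-01-01T00:00:00.012000+00:00",
    duration_ms=12,
    metrics={"match_count": 3},
)
```

Keeping metrics as an open dictionary lets each tool report its own counters without changing the record type.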

RetryPolicy.call() in src/harness_engineering/runner.py now wraps each tool call with perf_counter() timing and stamps the result with start/finish metadata.

Then HarnessRunner._estimate_step_metrics() derives lightweight engineering metrics from the step output and inputs, for example:

- match_count for search_mock

- fact_count and output chars for extract_facts

- estimated input/output token counts for draft_report

- bytes written for finalize_report

Finally, build_trace_summary() in src/harness_engineering/tracing.py rolls this into:

- total run duration across steps

- per-tool total and average duration

- estimated model token volume

- provider grouping for model-generation steps

- a cost status like local_or_mock or unpriced
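A per-tool duration rollup of this kind can be sketched in a few lines. This is an illustration, not the body of build_trace_summary(): the function name rollup_by_tool, the input shape (a list of dicts), and the count and average_duration_ms keys are assumptions. Only total_duration_ms and the by_tool grouping mirror names that appear in the trace summary.

```python
from collections import defaultdict


def rollup_by_tool(steps):
    """Aggregate step durations per tool.

    `steps` is assumed to be an iterable of dicts with 'tool_name' and
    'duration_ms' keys; steps without a duration are skipped.
    """
    by_tool = defaultdict(lambda: {"count": 0, "total_duration_ms": 0})
    for step in steps:
        if step.get("duration_ms") is None:
            continue
        entry = by_tool[step["tool_name"]]
        entry["count"] += 1
        entry["total_duration_ms"] += step["duration_ms"]

    for entry in by_tool.values():
        entry["average_duration_ms"] = entry["total_duration_ms"] / entry["count"]

    total = sum(entry["total_duration_ms"] for entry in by_tool.values())
    return {"total_duration_ms": total, "by_tool": dict(by_tool)}


# Illustrative input, not taken from a real run.
summary = rollup_by_tool([
    {"tool_name": "search_mock", "duration_ms": 10},
    {"tool_name": "draft_report", "duration_ms": 300},
    {"tool_name": "draft_report", "duration_ms": 100},
])
```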

And RunStore.build_summary() in src/harness_engineering/store.py exposes the operator-friendly version:

- performance.total_step_duration_ms

- performance.average_step_duration_ms

- performance.slowest_step

- cost.estimated_input_tokens

- cost.estimated_output_tokens

- cost.estimated_total_tokens

- cost.total_bytes_written

That is a modest feature set. Good. Modest is better than fake precision.

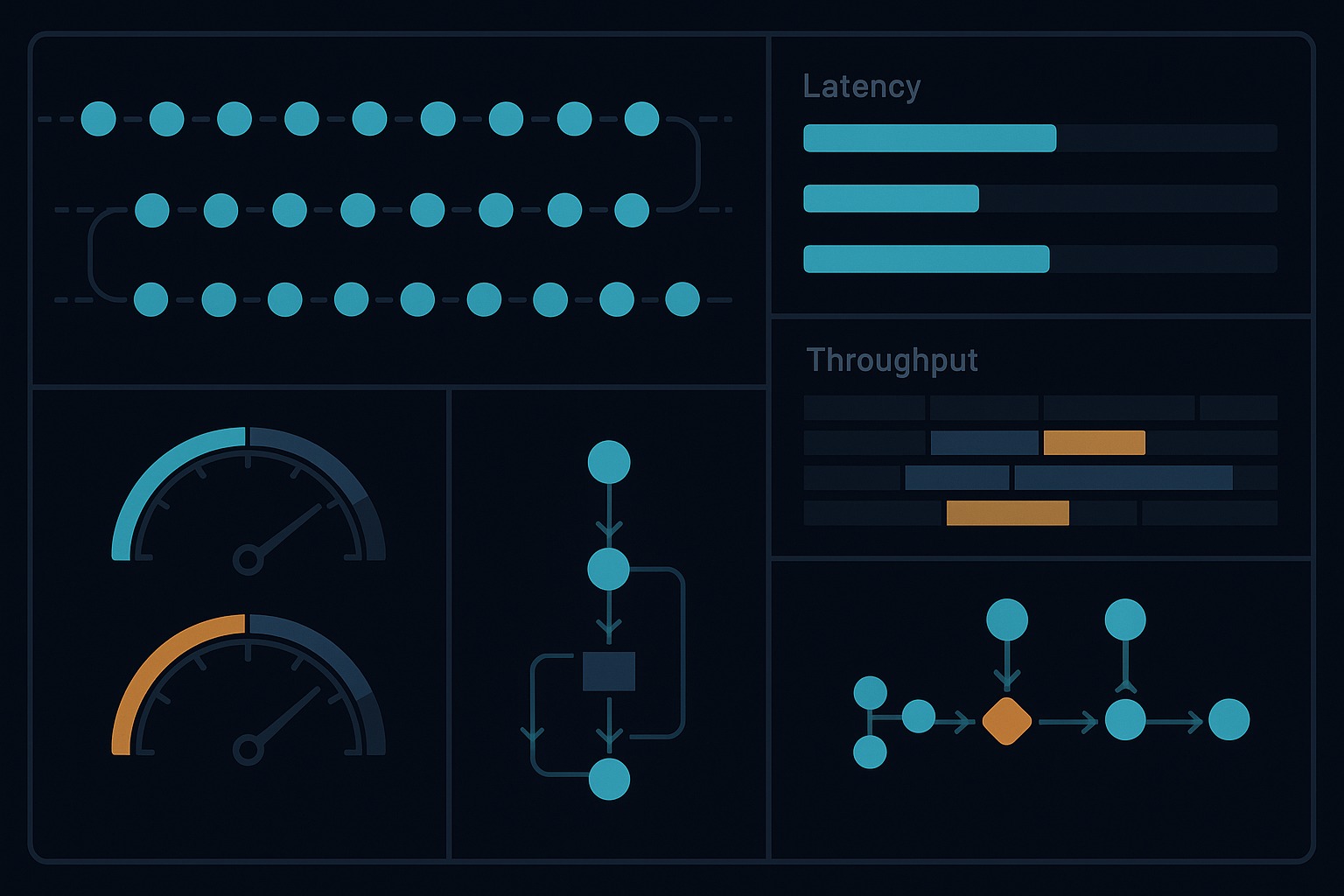

Why agent performance is mostly a harness problem

When people talk about LLM latency, they often collapse everything into model speed. Model speed matters, obviously. But in agent systems it is only one component.

The real user-visible latency is more like:

- request setup and network round-trip

- retrieval or tool latency

- model inference time

- retries after flaky steps

- serialization and validation costs

- approval pauses

- follow-up model calls caused by poor orchestration

The OpenAI latency optimization guide makes the same point at a more general level: reduce requests, reduce output tokens, parallelize when possible, and do not default to an LLM for everything. That is not prompt advice. That is system design advice.

Likewise, Anthropic’s prompt caching docs are useful not because caching is magic, but because they highlight a harness reality: repeated prefixes and repeated context are infrastructure concerns. If your harness keeps re-sending giant stable prefixes, you have a performance architecture issue.

And on the serving side, projects like vLLM put serious effort into metrics and saturation visibility for exactly the same reason: throughput engineering requires a runtime view, not just a prompt view.

That is why I think “cost/latency/throughput engineering” belongs in a harness series and not in a generic prompting series.

The new measurement model in the repo

There are two choices in this update that I particularly like.

1. The repo measures wall-clock duration at the step boundary

The important unit is not “how long did Python take” in the abstract. It is “how long did this harness step take from the runtime’s point of view?”

That is why timing is attached to StepResult, not just printed in logs.

In RetryPolicy.call(), the harness records start time, finish time, and total elapsed time across retries. That means a flaky step does not look artificially cheap. If a tool succeeds on the second attempt, the latency cost of that retry is reflected in the step result.

That is a subtle but correct choice. Operators care about end-to-end step cost, not just successful-final-attempt cost.
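The idea of timing a step across all of its attempts can be sketched as follows. This is a minimal illustration and not the repo's RetryPolicy.call(): the function name call_with_timing, the max_attempts parameter, and the returned dict shape are assumptions. The point it shows is that the clock starts before the first attempt and stops after the last one.

```python
import time
from datetime import datetime, timezone


def call_with_timing(fn, max_attempts=2):
    """Run fn with simple retries and time the whole step, retries included."""
    started_at = datetime.now(timezone.utc).isoformat()
    t0 = time.perf_counter()  # monotonic clock, suited to measuring intervals

    attempts = 0
    output = None
    error = None
    ok = False
    while attempts < max_attempts:
        attempts += 1
        try:
            output = fn()
            ok, error = True, None
            break
        except Exception as exc:  # a real policy would narrow this
            error = repr(exc)

    duration_ms = round((time.perf_counter() - t0) * 1000)
    return {
        "ok": ok,
        "output": output,
        "error": error,
        "attempts": attempts,
        "started_at": started_at,
        "finished_at": datetime.now(timezone.utc).isoformat(),
        "duration_ms": duration_ms,  # covers failed attempts too
    }


# Usage: a tool that fails once, then succeeds.
_calls = {"n": 0}


def flaky_tool():
    _calls["n"] += 1
    time.sleep(0.02)
    if _calls["n"] == 1:
        raise RuntimeError("transient failure")
    return "ok"


timed = call_with_timing(flaky_tool)
```

Here duration_ms includes the time spent in the failed first attempt, so the step does not look cheaper than it was.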

2. The repo estimates workload, but does not pretend to know billing truth

I strongly prefer this over fake dollar math.

HarnessRunner._estimate_step_metrics() computes coarse token estimates for draft_report from character counts. That gives the harness a rough proxy for model work volume without claiming that character count equals provider billing count.

The trace summary then labels the cost state honestly:

- local_or_mock when the draft step used the mock/local path

- unpriced when it used an OpenAI-compatible provider but the harness does not know pricing

And the summary explicitly says these are engineering heuristics, not billing data.
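A character-based estimate with an honest cost label might look like the sketch below. It is not the repo's _estimate_step_metrics(): the ratio of four characters per token is a common rule of thumb chosen here as an assumption, and the function names and the provider strings "mock" and "local" are hypothetical. Only the labels local_or_mock and unpriced and the estimated_* key names come from the post.

```python
import math

CHARS_PER_TOKEN = 4  # assumed rule of thumb, not a tokenizer


def estimate_tokens(text: str) -> int:
    """Coarse proxy for model work volume based on character count."""
    return math.ceil(len(text) / CHARS_PER_TOKEN)


def cost_status(provider: str) -> str:
    """Label the cost state without inventing a price."""
    # The provider names "mock" and "local" are assumptions for this sketch.
    if provider in {"mock", "local"}:
        return "local_or_mock"
    return "unpriced"


def estimate_draft_metrics(prompt: str, draft: str, provider: str) -> dict:
    input_tokens = estimate_tokens(prompt)
    output_tokens = estimate_tokens(draft)
    return {
        "estimated_input_tokens": input_tokens,
        "estimated_output_tokens": output_tokens,
        "estimated_total_tokens": input_tokens + output_tokens,
        "cost_status": cost_status(provider),
        "note": "engineering heuristic, not billing data",
    }


# Illustrative inputs, not taken from a real run.
metrics = estimate_draft_metrics("a" * 100, "b" * 42, "openai_compatible")
```

The estimate is useful for comparing relative volume between steps and runs. It should not be multiplied by a price.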

That honesty matters.

Too many demos either track nothing, which is useless, or they invent precision, which is worse.

A real run from the updated repo

Before publishing, I ran the required checks in the repo:

cd /home/james/.openclaw/workspace/harness-engineering

make check

PYTHONPATH=src python3 -m harness_engineering.cli doctor

make check passed, including tests and secret scanning.

doctor also passed against the repo-local OpenAI-compatible endpoint with:

- provider: openai_compatible

- model: gemma4

- base URL: http://192.168.0.16:8080/v1

- status: ok

- message: MODEL_OK

I then ran a repo-backed example with the new instrumentation:

PYTHONPATH=src python3 -m harness_engineering.cli start \
    --topic "Cost latency throughput engineering for agent harnesses" \
    --source-file sample_data/sources.json \
    --runs-dir .runs-post12

That run produced a real and useful result, even though it did not complete successfully.

Run ID: bd6bbf2e-59dc-4688-a629-c808039e9f39

The summary showed:

- status: failed

- current_step: draft_report

- duration_seconds: 29

- performance.total_step_duration_ms: 11397

- performance.slowest_step.tool_name: draft_report

- cost.estimated_total_tokens: 447

The trace summary showed:

- performance.total_duration_ms: 11397

- performance.by_tool.draft_report.total_duration_ms: 11397

- performance.by_tool.draft_report.providers: ["openai_compatible"]

- cost.estimated_input_tokens: 216

- cost.estimated_output_tokens: 231

- cost.estimated_total_tokens: 447

- cost.status: unpriced

That is exactly the kind of evidence I want from a demo. Not just “the model was slow,” but “which step was slow, how much work volume it represented, and whether the run even reached approval.”

The useful surprise: performance instrumentation also clarifies failures

This run also exposed a real limitation that the article should not hide.

The run failed because the local reviewer returned fenced JSON instead of raw JSON, and review_markdown() in src/harness_engineering/reviewer.py treated that as invalid reviewer output.

The saved findings included:

Reviewer returned non-JSON output

That is annoying, but it is also instructive.

A performance layer is not just for “fast vs slow.” It helps separate:

- model-generation cost

- reviewer-format brittleness

- approval wait time

- policy denials

- filesystem write costs

In other words, once you can attribute cost and latency at the harness-step level, you stop treating every failure as a vague “LLM issue.”

That is a big improvement in operational clarity.

What this means in practice

If I were explaining the engineering lesson in one sentence, it would be this:

Measure work at the harness boundary where the operator can act on it.

That means step-level units like:

- search step duration

- extraction step volume

- draft-generation token estimate

- review pass/fail and latency

- write size and approval boundary

Those are actionable.

By contrast, a single “request took 14 seconds” metric is not very actionable in an agent system. It tells you the user waited. It does not tell you what to change.

Cost engineering is really decision engineering

There are at least four cost decisions that a harness should eventually make explicit.

1. Which steps deserve an expensive model?

In this repo, the expensive-feeling step is clearly draft_report. That is where token volume accumulates. That is also where provider-specific latency showed up in the example run.

That suggests a future design direction: cheap planner/reviewer modes versus richer drafting modes.

2. Which steps should be merged to avoid extra round trips?

The OpenAI guidance is right here: fewer requests often matters more than shaving a small number of input tokens. A harness should know when it is paying orchestration tax for unnecessary decomposition.

3. Which context is stable enough to cache?

Anthropic’s prompt caching docs matter because they point at a harness optimization, not a prose optimization. Stable prefixes, repeated instructions, and repeated examples should be handled deliberately by the runtime.
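Before a harness can cache anything, it helps to measure how much of each request is actually stable. The sketch below is one hypothetical way to do that and is not part of the repo: the function name prefix_stats and the idea of splitting a prompt into a stable prefix and a variable suffix are assumptions made for illustration. It does not call any provider caching API.

```python
import hashlib


def prefix_stats(stable_prefix: str, variable_suffix: str) -> dict:
    """Report how much of a prompt is a repeated, cache-friendly prefix."""
    total_chars = len(stable_prefix) + len(variable_suffix)
    digest = hashlib.sha256(stable_prefix.encode("utf-8")).hexdigest()
    return {
        # The same hash across requests means the same prefix was re-sent.
        "prefix_sha256": digest[:12],
        "prefix_chars": len(stable_prefix),
        "suffix_chars": len(variable_suffix),
        "stable_fraction": round(len(stable_prefix) / total_chars, 3) if total_chars else 0.0,
    }


# Two requests that share instructions but differ in the question.
instructions = "You are a careful report writer. " * 20
first = prefix_stats(instructions, "Summarize source A.")
second = prefix_stats(instructions, "Summarize source B in more detail.")
same_prefix = first["prefix_sha256"] == second["prefix_sha256"]
```

If the same prefix hash shows up on most requests with a high stable fraction, that is a signal the runtime is re-sending context it could handle more deliberately.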

4. Where does throughput collapse under load?

This repo is still single-run and local, so it does not answer that question yet. But the vLLM metrics work is a good reminder that throughput engineering is about saturation visibility. Once a serving system approaches saturation, latency and throughput interact in non-linear ways.

A serious harness will eventually need both per-run metrics and fleet/server metrics.

What the demo proves

1. Performance instrumentation belongs in the harness, not beside it

The repo now records timing and workload information as part of StepResult, traces, and summaries. That makes performance a first-class runtime artifact.

2. Lightweight estimates are still useful if they are honest

The token counts are approximate. They are still useful because they let operators compare relative work volume across runs and steps.

3. The slow step is often obvious once you measure at the right boundary

In the repo-backed example, draft_report dominated elapsed step time. That is the kind of fact you can optimize around.

4. Performance visibility improves failure diagnosis too

The reviewer JSON-format failure was easier to reason about because the run summary separated drafting cost from review failure from approval state.

What it still does not solve

This demo is better now, but it still does not solve:

- provider-accurate token accounting

- provider-accurate dollar cost estimation

- queueing and concurrency metrics

- throughput under multiple simultaneous runs

- saturation detection for the model server

- streaming token latency like time-to-first-token

- distinction between compute time and network time

- automatic caching or prompt-prefix reuse

- model routing by budget or latency target

- SLO enforcement

It also does not yet instrument planning and review as separate measured external steps in the same way a production harness would if those were independent model calls with strict budgets.

So this is not a cost platform. It is a practical step toward cost-aware harness design.

Honest limitations

I see four main limitations in the current implementation.

First, duration_ms is step wall-clock time, not distributed tracing. It is good enough for this demo, but it does not break down network, serialization, provider, and local processing separately.

Second, the token estimates are deliberately coarse. They come from character counts, not tokenizer truth.

Third, the repo still lacks a throughput story above one run at a time. You can reason about one run’s shape, but not yet about queueing, saturation, or multi-run contention.

Fourth, the reviewer path still has brittle parsing behavior with fenced JSON. That is a real harness problem, and the instrumentation helps expose it, but it is not fixed by this post’s change set.
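One possible mitigation, which is not in the repo at the commit discussed here, is to unwrap a single Markdown code fence before parsing the reviewer output. The sketch below is an assumption about how that could be done: the function name parse_reviewer_json and the "passed" key in the example payload are hypothetical, and review_markdown() may need different handling.

```python
import json
import re

# Built indirectly so the example itself can sit inside a fenced block.
TICKS = "`" * 3

_FENCED = re.compile(
    r"^\s*" + TICKS + r"[A-Za-z0-9_-]*[ \t]*\n(.*?)\n?[ \t]*" + TICKS + r"\s*$",
    re.DOTALL,
)


def parse_reviewer_json(raw: str):
    """Parse reviewer output, tolerating one surrounding code fence.

    Raises json.JSONDecodeError if the content is still not valid JSON,
    so real format failures stay visible.
    """
    match = _FENCED.match(raw)
    candidate = match.group(1) if match else raw
    return json.loads(candidate)


# Usage with a hypothetical payload shape.
fenced = TICKS + 'json\n{"passed": true}\n' + TICKS
plain = '{"passed": false}'
parsed_fenced = parse_reviewer_json(fenced)
parsed_plain = parse_reviewer_json(plain)
```

Whether to tolerate fences or to reject them and retry the reviewer is a policy decision. Either way, the choice should show up in the trace.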

The broader lesson

If you want agents that are economically usable, you need to stop asking only, “Did the model answer well?”

You also need to ask:

- How many requests did the harness make?

- Which step burned the most wall-clock time?

- How much generated output did we really ask for?

- Which steps could be cheaper or cached?

- Where did the run fail before the user got value?

- Are we measuring the unit of work that an operator can actually optimize?

That is why I keep coming back to the same thesis in this series.

Prompt engineering matters. But once you are building a real system, the bigger wins usually come from the harness:

- fewer round trips

- clearer step boundaries

- explicit approval pauses

- persisted summaries

- runtime policy

- traceable retries

- honest performance counters

The updated demo repo still keeps things intentionally small. That is a feature, not a bug. It lets the performance story stay legible.

And legibility is underrated.

If your agent stack cannot explain where the time and work went, then you do not really have performance engineering yet. You just have waiting.

References

- Live repo: https://github.com/67ailab/harness-engineering

- OpenAI, “Latency optimization”: https://developers.openai.com/api/docs/guides/latency-optimization

- Anthropic, “Prompt caching”: https://docs.anthropic.com/en/docs/build-with-claude/prompt-caching

- vLLM metrics documentation: https://docs.vllm.ai/en/stable/usage/metrics/