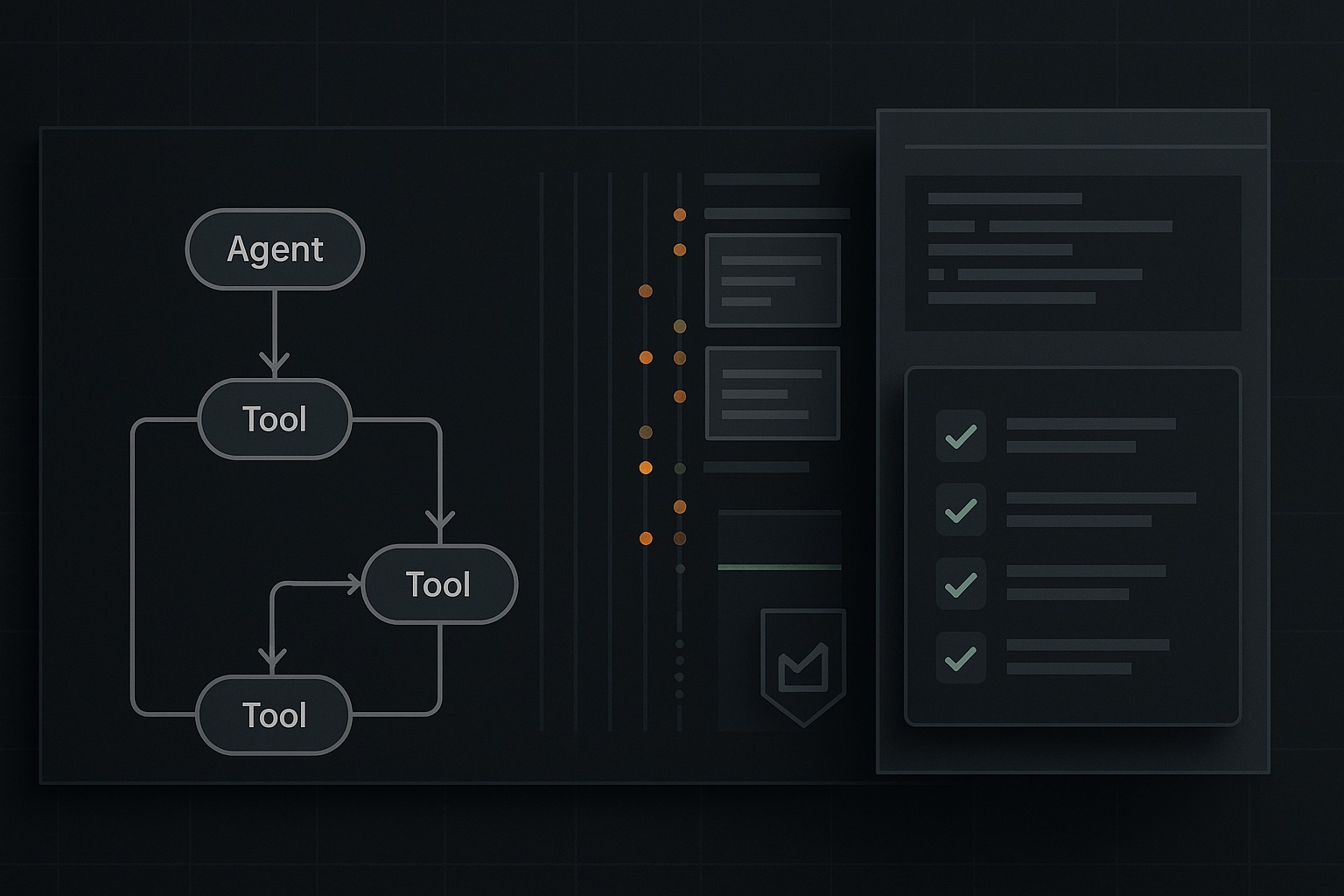

Tracing, Observability, and Evals for Agent Systems

The live demo repo for this series is 67ailab/harness-engineering, and for this post I did change the repo before publishing. The new capability shipped in commit 85c762c, which adds two concrete things the repo was missing:

- a persisted trace-summary surface for every run
- a lightweight eval runner with trace-aware fixtures

The key changes are in src/harness_engineering/tracing.py, src/harness_engineering/store.py, src/harness_engineering/cli.py, and the new src/harness_engineering/evals.py module, plus starter fixtures in sample_data/evals/basic.json.

That matters because a lot of agent writing still treats observability as an afterthought and evals as a benchmark spreadsheet. In practice, most production pain shows up somewhere else: ...