The live demo repo for this series is 67ailab/harness-engineering, and for this post I changed the repo before publishing. The new capability shipped in commit 85c762c, which adds two concrete things the repo was missing:

- a persisted trace-summary surface for every run

- a lightweight eval runner with trace-aware fixtures

The key changes are in src/harness_engineering/tracing.py, src/harness_engineering/store.py, src/harness_engineering/cli.py, and the new src/harness_engineering/evals.py module, plus starter fixtures in sample_data/evals/basic.json.

That matters because a lot of agent writing still treats observability as an afterthought and evals as a benchmark spreadsheet. In practice, most production pain shows up somewhere else:

- the agent paused but you do not know why

- a run failed but the trace is too raw to inspect quickly

- retries happened but nobody can see where

- the workflow reached the wrong state even though the text output looked plausible

- a demo seems fine until you ask whether the same runtime behavior happens every time

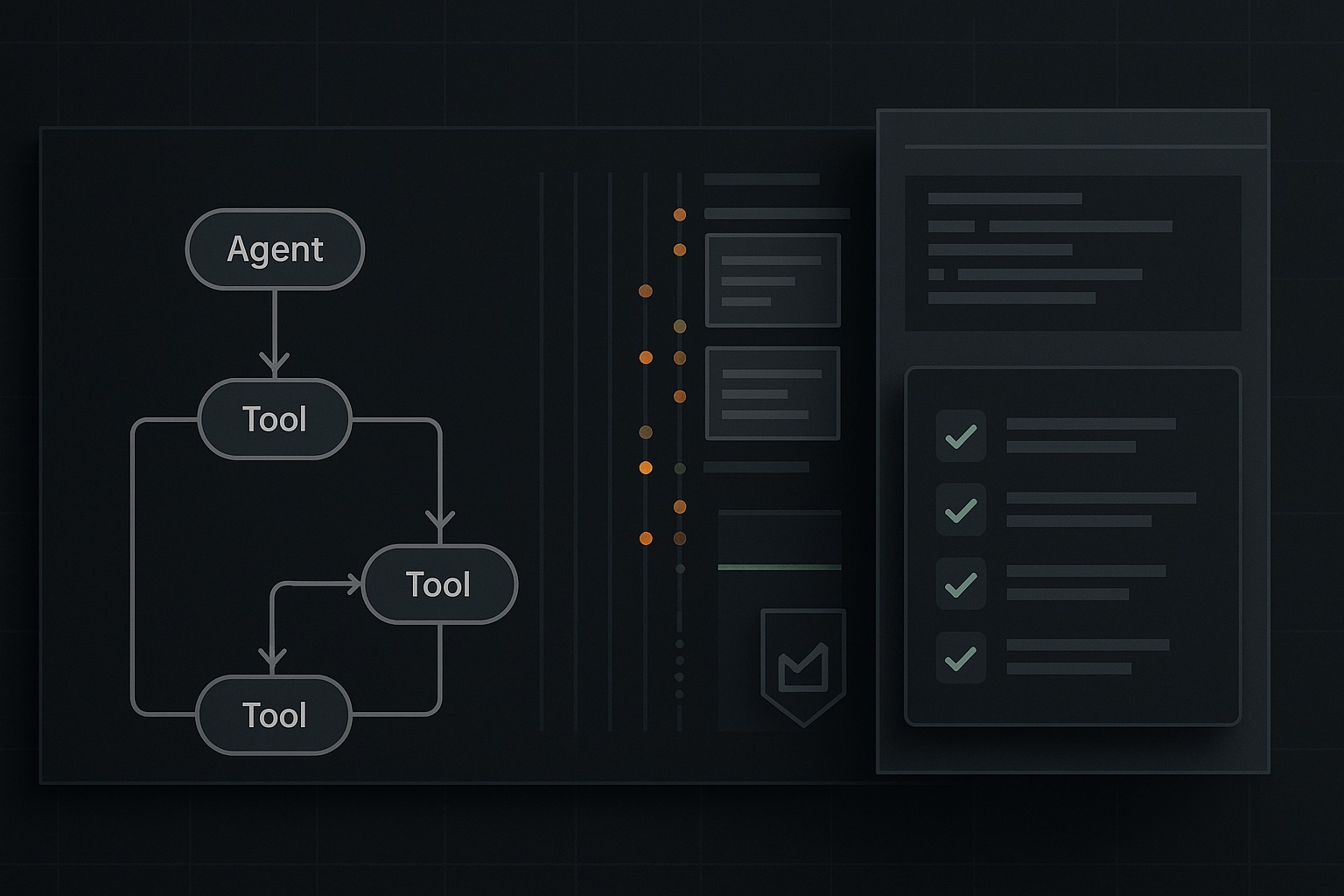

My practical claim in this post is simple: for agent systems, tracing and evals should mostly be about runtime behavior, not just output quality. If you cannot see the path a run took, and if you cannot assert that the run emitted the right events and reached the right control boundaries, you are not evaluating the harness yet. You are grading prose while the system underneath stays opaque.

This repo is still intentionally small. Good. Small code makes the observability story visible.

What changed in the repo since the previous post

Post 7 made human approval more legible by adding pending_action_details and a pending CLI command. That improved the operator-facing approval boundary. But it also made the next gap obvious.

The repo already had traces. Specifically:

- add_trace() in src/harness_engineering/tracing.py

- raw trace persistence to .runs/<run_id>/trace.json through RunStore.save() in src/harness_engineering/store.py

- history inspection through cmd_history() in src/harness_engineering/cli.py

That was enough to say, truthfully, that the repo stores execution traces.

It was not enough to support a strong post about observability and evals.

Why? Because raw traces alone are only half the job. Operators need a compact summary, and engineers need a repeatable way to assert that traces contain the events that define correct harness behavior.

So for Post 8 I added two concrete repo capabilities.

1. Compact trace summaries

src/harness_engineering/tracing.py now includes build_trace_summary(state).

That function rolls a saved run into a small observability payload containing:

- run status and current step

- total trace-event count

- step count

- event counts by event name

- tool counts by tool name

- retry/attempt counts by tool

- first and last event timestamps

- latest event name

- approval-gate state

- reviewer result

- final artifact presence

- any failed step results
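To make that list concrete, here is a minimal Python sketch of such a roll-up. It is not the repo's build_trace_summary(); it assumes a hypothetical state shape in which trace is a list of event dicts with event and ts keys (plus optional tool and attempt), and steps, approval, review, and final_report_path sit at the top level of the state.

```python
from collections import Counter
from typing import Any


def summarize_trace(state: dict[str, Any]) -> dict[str, Any]:
    """Roll a run state into a compact summary (hypothetical schema, not the repo's)."""
    trace = state.get("trace", [])
    steps = state.get("steps", [])
    event_counts = Counter(e["event"] for e in trace)
    # One count per tool_start, keyed by tool name.
    tool_counts = Counter(e["tool"] for e in trace if e["event"] == "tool_start")
    # Highest attempt number seen per tool; a value above 1 means a retry happened.
    attempts: dict[str, int] = {}
    for e in trace:
        if e["event"] == "tool_start":
            attempts[e["tool"]] = max(attempts.get(e["tool"], 0), e.get("attempt", 1))
    failures = [s for s in steps if s.get("status") == "failed"]
    return {
        "status": state.get("status"),
        "current_step": state.get("current_step"),
        "trace_events": len(trace),
        "steps": len(steps),
        "event_counts": dict(event_counts),
        "tool_counts": dict(tool_counts),
        "attempts": attempts,
        "first_event_at": trace[0]["ts"] if trace else None,
        "last_event_at": trace[-1]["ts"] if trace else None,
        "latest_event": trace[-1]["event"] if trace else None,
        "approval": state.get("approval", {}),
        "review": state.get("review"),
        "has_final_report": bool(state.get("final_report_path")),
        "failures": failures,
    }
```

The design choice worth copying is that every field is cheap to derive from data the run already persists, so the summary can be recomputed on every save.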

That summary is persisted on every save because RunStore.save() in src/harness_engineering/store.py now writes:

.runs/<run_id>/trace_summary.json

RunStore also gained trace_summary_path(), and build_summary() now exposes that path alongside state, trace, summary, and memory.
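The persistence pattern is simple enough to sketch. The class below is a hypothetical stand-in, not the repo's RunStore: it assumes the summarizer is passed in as a callable and only shows the idea of writing trace.json and trace_summary.json side by side under .runs/<run_id>/ on every save.

```python
import json
from pathlib import Path
from typing import Any, Callable


class SketchRunStore:
    """Hypothetical store: raw trace and compact summary written side by side."""

    def __init__(self, root: Path, summarize: Callable[[dict[str, Any]], dict[str, Any]]) -> None:
        self.root = root
        self.summarize = summarize

    def trace_path(self, run_id: str) -> Path:
        return self.root / run_id / "trace.json"

    def trace_summary_path(self, run_id: str) -> Path:
        return self.root / run_id / "trace_summary.json"

    def save(self, run_id: str, state: dict[str, Any]) -> None:
        (self.root / run_id).mkdir(parents=True, exist_ok=True)
        # Raw events: the detailed record.
        self.trace_path(run_id).write_text(json.dumps(state.get("trace", []), indent=2))
        # Compact roll-up, recomputed on every save so it never lags the raw trace.
        summary = self.summarize(state)
        self.trace_summary_path(run_id).write_text(json.dumps(summary, indent=2))
```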

On the CLI side, src/harness_engineering/cli.py now exposes:

PYTHONPATH=src python3 -m harness_engineering.cli trace-summary --latest

That is a small addition, but I think it is exactly the right kind of small. Observability should make the runtime legible without forcing every operator to read the full raw event stream first.
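How --latest picks a run is not spelled out here. A plausible approach, sketched below under the assumption that "latest" means the most recently modified directory under .runs/, is to pick that directory and read its trace_summary.json. The function names are hypothetical.

```python
import json
from pathlib import Path
from typing import Any, Optional


def latest_run_dir(runs_dir: Path) -> Optional[Path]:
    """Most recently modified run directory, or None if there are no runs."""
    runs = [p for p in runs_dir.iterdir() if p.is_dir()] if runs_dir.exists() else []
    return max(runs, key=lambda p: p.stat().st_mtime, default=None)


def load_latest_summary(runs_dir: Path) -> Optional[dict[str, Any]]:
    """Read trace_summary.json from the latest run, if both exist."""
    run = latest_run_dir(runs_dir)
    if run is None:
        return None
    path = run / "trace_summary.json"
    return json.loads(path.read_text()) if path.exists() else None
```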

2. Lightweight trace-aware evals

The repo now also has a new file: src/harness_engineering/evals.py.

The important functions are:

- load_eval_fixtures(path)

- run_eval_case(fixture, fixtures_path, runs_dir)

- run_eval_suite(fixtures_path, runs_dir)

The fixture format in sample_data/evals/basic.json is deliberately simple. Each case can declare things like:

- topic

- source_file

- auto_approve

- expected_status

- expected_current_step

- required_events

- min_trace_events

- expect_final_report

That means the evals are not trying to grade writing style. They are checking harness behavior.
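A typed loader makes that fixture contract explicit. The sketch below is not the repo's load_eval_fixtures(); the name field, the defaults, and accepting either a bare list or a {"cases": [...]} wrapper are all assumptions for illustration. Unknown keys raise a TypeError, which is a reasonable way to catch typos in fixture files.

```python
import json
from dataclasses import dataclass, field
from pathlib import Path
from typing import Optional


@dataclass
class EvalFixture:
    # Keys mirror the fixture fields listed above; "name" and all defaults are assumptions.
    name: str
    topic: str
    source_file: str
    auto_approve: bool = False
    expected_status: Optional[str] = None
    expected_current_step: Optional[str] = None
    required_events: list[str] = field(default_factory=list)
    min_trace_events: int = 0
    expect_final_report: bool = False


def parse_fixtures(text: str) -> list[EvalFixture]:
    data = json.loads(text)
    # Accept a bare list of cases or a {"cases": [...]} wrapper (hypothetical layouts).
    cases = data["cases"] if isinstance(data, dict) else data
    return [EvalFixture(**case) for case in cases]


def load_fixtures(path: Path) -> list[EvalFixture]:
    return parse_fixtures(path.read_text())
```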

A fixture can now ask questions like:

- Did the run pause at waiting_approval?

- Did it reach done after approval?

- Did the trace include approval_required, approval_granted, run_resumed, and run_completed?

- Did the run produce a final artifact when it was supposed to?

That is the kind of eval surface I trust more than vague claims about “the agent looked good.”

The CLI exposes this through:

PYTHONPATH=src python3 -m harness_engineering.cli evals

Again, this is intentionally modest. It is not a full eval platform. It is a correct first move.

Why raw traces are not enough

OpenTelemetry’s tracing docs define traces as the path of a request through an application. I like that framing because it moves attention away from single events and toward the sequence and structure of work.

That matters even more for agent systems than for ordinary request/response apps.

In a normal API service, you might ask:

- which service handled the request

- which database query was slow

- where the error came from

In an agent harness, you also need to ask:

- which tool calls happened and in what order

- whether the system paused at the right control boundary

- whether retries occurred

- whether the human approval gate actually fired

- whether the run resumed from the same state or silently restarted

- whether the final side effect happened only after approval

A raw trace file can contain all of that, but it is still too low-level for fast operational understanding.

That is why build_trace_summary(state) in src/harness_engineering/tracing.py is useful. It does not replace the raw trace. It gives you the smallest roll-up that still answers the operator question:

what path did this run take, and where is it now?

I think that is a good observability design rule generally. Logs are for detail. Summaries are for orientation. You want both.

The trace vocabulary in the current demo

The nice thing about this repo is that the trace vocabulary is still small enough to reason about directly.

Across HarnessRunner.create_run(), HarnessRunner._execute(), HarnessRunner.run_until_pause_or_complete(), HarnessRunner.approve(), and HarnessRunner.resume() in src/harness_engineering/runner.py, the runner emits events like:

- run_created

- tool_start

- tool_ok

- tool_error

- draft_reviewed

- approval_required

- approval_still_required

- approval_granted

- run_resumed

- run_completed

That is already enough to reconstruct the control story of a run.

For example, the happy path of an approval-gated run should look roughly like this:

- run_created

- tool_start/tool_ok for search_mock

- tool_start/tool_ok for extract_facts

- tool_start/tool_ok for draft_report

- draft_reviewed

- approval_required

- approval_granted

- run_resumed

- tool_start/tool_ok for finalize_report

- run_completed
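Counts alone cannot tell you whether approval_granted came before run_resumed. One way to assert the ordering of that path is an ordered-subsequence check over event names. This is a hypothetical helper, not something the repo currently ships; the milestone list keeps only the control boundaries from the path and skips the tool_start/tool_ok pairs.

```python
# Control-boundary milestones from the happy path; tool_start/tool_ok pairs are omitted.
HAPPY_PATH_MILESTONES = [
    "run_created",
    "draft_reviewed",
    "approval_required",
    "approval_granted",
    "run_resumed",
    "run_completed",
]


def follows_in_order(events: list[str], expected: list[str]) -> bool:
    """Return True if `expected` occurs in `events` as an ordered subsequence."""
    remaining = iter(events)
    # `name in remaining` advances the shared iterator past the first match,
    # so each milestone must appear after the previous one.
    return all(name in remaining for name in expected)
```

Usage would look like follows_in_order([e["event"] for e in trace], HAPPY_PATH_MILESTONES), assuming each trace entry carries its name under an event key.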

The trace summary now compresses exactly that kind of story into counts, latest event, approval status, and failures.

That sounds obvious. It is still more honest than a lot of agent demos, because the repo exposes the path instead of skipping from prompt to final output.

A verified run from the live repo

Before writing this post, I verified the repo behavior in /home/james/.openclaw/workspace/harness-engineering.

First I ran the required checks:

make check

PYTHONPATH=src python3 -m harness_engineering.cli doctor

Those passed.

- make check ran the test suite and the secret scan.

- The repo now passes 27 tests.

- scripts/secret_scan.py reported no obvious secrets in tracked files.

- doctor succeeded against the repo’s configured local OpenAI-compatible endpoint with:
  - provider: openai_compatible
  - model: gemma4
  - base URL: http://192.168.0.16:8080/v1
  - status: ok
  - message: MODEL_OK

Then I exercised the new eval runner:

PYTHONPATH=src python3 -m harness_engineering.cli evals

That ran the default suite in sample_data/evals/basic.json and returned two passing cases.

Eval case 1: approval-pause-baseline

This fixture checks the pause boundary.

It expects:

- status = waiting_approval

- current_step = finalize_report

- required trace events including approval_required

- at least a minimum trace-event count

The actual trace summary reported:

- trace_events: 9

- steps: 3

- event counts including run_created, tool_start, tool_ok, draft_reviewed, and approval_required

- approval.required: true

- approval.pending_action: finalize_report

- no failures

That is exactly what I want from a harness eval. It is testing the workflow contract.

Eval case 2: end-to-end-complete

This fixture sets auto_approve: true, so it checks the full run.

It expects:

- status = completed

- current_step = done

- events including approval_granted and run_completed

- a final report artifact on disk

The actual trace summary reported:

- trace_events: 14

- steps: 4

- event counts including approval_required, approval_granted, run_resumed, and run_completed

- approval.required: false

- approval.approved: true

- a concrete final_report_path

- no failures

That is a much stronger claim than “the agent eventually wrote markdown.” It shows that the runtime traversed the intended states.

Why trace-aware evals matter more than style scoring

There is nothing wrong with output-quality evals. You still care whether the generated text is coherent and accurate.

But a harness-oriented system fails in ways that output-only grading will miss.

A run can produce a plausible final report while still violating the runtime contract:

- the risky tool might have fired without an approval gate

- the run might have restarted instead of resumed

- the retry path might be exploding silently

- the reviewer might have failed but the operator never saw it

- the wrong step might have been marked complete

None of those are mainly language-quality problems. They are harness problems.

That is why run_eval_case() in src/harness_engineering/evals.py is shaped around run state and trace evidence.

The internal helper in that function constructs checks like:

- status

- current_step

- event:<event_name>

- min_trace_events

- final_report_exists

Then it evaluates pass/fail by aggregating those checks, not by asking a model whether the output “seems good.”
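That check-then-aggregate shape fits in a few lines. The sketch below is a hypothetical reimplementation, not the repo's internal helper: it assumes the fixture is a plain dict using the keys listed earlier and that the run's event names have already been extracted into a list. It reuses the check names above so a failing case points directly at the broken contract.

```python
from pathlib import Path
from typing import Any


def build_checks(fixture: dict[str, Any], state: dict[str, Any],
                 event_names: list[str]) -> dict[str, bool]:
    """Map each check name to pass/fail; only checks the fixture asks for are built."""
    checks: dict[str, bool] = {}
    if "expected_status" in fixture:
        checks["status"] = state.get("status") == fixture["expected_status"]
    if "expected_current_step" in fixture:
        checks["current_step"] = state.get("current_step") == fixture["expected_current_step"]
    for name in fixture.get("required_events", []):
        checks[f"event:{name}"] = name in event_names
    if "min_trace_events" in fixture:
        checks["min_trace_events"] = len(event_names) >= fixture["min_trace_events"]
    if fixture.get("expect_final_report"):
        path = state.get("final_report_path")
        checks["final_report_exists"] = bool(path) and Path(path).exists()
    return checks


def case_passed(checks: dict[str, bool]) -> bool:
    # Every check must hold; an empty check set counts as a failure, not a vacuous pass.
    return bool(checks) and all(checks.values())
```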

This is much closer to how I think agent-system evals should start.

Start by verifying:

- control boundaries

- state transitions

- artifact side effects

- retry behavior

- human-review paths

Then add quality evals on top.

Not the other way around.

Where this aligns with bigger frameworks

The repo is still a tiny local harness. It is not pretending to be LangGraph, the OpenAI Agents SDK, or a full OpenTelemetry implementation.

But the direction is consistent with what those systems emphasize.

OpenAI Agents SDK

The OpenAI Agents SDK docs repeatedly frame runs as resumable stateful workflows with built-in tracing, human-in-the-loop interruptions, and evaluation surfaces. Their human-in-the-loop docs make an especially important point: when a tool needs approval, the run pauses, returns interruptions, and later resumes from the same state rather than starting over.

That is the same architectural instinct this repo now demonstrates in miniature.

LangGraph

LangGraph’s overview docs describe the runtime as being about durable execution, human-in-the-loop, persistence, and debugging/observability via LangSmith.

Again, the implementation is far richer than this repo. But the conceptual stack matches:

- execution path matters

- state transitions matter

- operator inspection matters

- evaluation belongs near runtime behavior, not separate from it

OpenTelemetry

OpenTelemetry’s traces docs describe traces as the path of a request through an application, with structured events and hierarchy giving you the big picture of what happened.

This repo does not have nested spans or cross-service correlation. It should not fake that.

What it does now have is a small, structured trace vocabulary plus a summary layer. That is enough to teach the right habit: a run is not just an output. It is a path.

The practical observability rule for agent systems

If I had to compress this whole post into one engineering rule, it would be this:

observe the control flow, not just the content.

For ordinary software, people eventually learned that “did the endpoint return 200?” was not enough. You also need latency, errors, spans, retries, downstream dependencies, and state transitions.

Agent systems need the same maturity step.

Do not only ask:

- was the answer fluent?

- was the final markdown acceptable?

Also ask:

- did the risky boundary trigger?

- did the run pause where policy said it should?

- did resume happen after approval rather than before it?

- did the expected trace events appear?

- did the artifact get written only after the gate?

- did retries happen, and how many?
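Several of those questions reduce to ordering and counting rules over the trace. Here is a minimal sketch under stated assumptions: finalize_report is the gated side effect, a healthy resumed run has exactly one run_created, and every retry emits another tool_start for the same tool. These are illustrative invariants, not checks the repo currently runs.

```python
from collections import Counter
from typing import Any


def runtime_violations(trace: list[dict[str, Any]],
                       gated_tool: str = "finalize_report") -> list[str]:
    """Return contract violations found in the trace (empty list means none)."""
    names = [e["event"] for e in trace]
    problems: list[str] = []
    # A resumed run should keep its single run_created; a second one hints at a restart.
    if names.count("run_created") != 1:
        problems.append("expected exactly one run_created")
    # The gated side effect must not start before approval was granted.
    gated = [i for i, e in enumerate(trace)
             if e["event"] == "tool_start" and e.get("tool") == gated_tool]
    if gated and ("approval_granted" not in names
                  or names.index("approval_granted") > gated[0]):
        problems.append(f"{gated_tool} started before approval_granted")
    # Resume should follow approval, not precede it.
    if "run_resumed" in names and ("approval_granted" not in names
                                   or names.index("run_resumed") < names.index("approval_granted")):
        problems.append("run_resumed before approval_granted")
    return problems


def retries_by_tool(trace: list[dict[str, Any]]) -> dict[str, int]:
    """Retries per tool, assuming each retry emits an extra tool_start."""
    starts = Counter(e["tool"] for e in trace if e["event"] == "tool_start")
    return {tool: n - 1 for tool, n in starts.items() if n > 1}
```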

That is the difference between observing a chatbot and observing a workflow system.

What the demo proves

1. Raw traces become much more useful once you add a compact summary

build_trace_summary(state) is a small function, but it materially improves legibility. Operators can inspect run shape quickly before diving into trace.json.

2. Harness evals should verify runtime contracts

run_eval_case() and run_eval_suite() show that even a simple fixture format can test the things that matter operationally: state, transitions, events, and side effects.

3. A tiny local repo can still demonstrate serious observability ideas

This repo is not enterprise infrastructure. Good. It still now demonstrates a clean progression:

- raw trace persistence

- operator-facing summary

- trace-aware fixtures

- CLI surfaces for all three

That is enough to make the lesson real.

4. Approval workflows are especially good candidates for trace-based evals

The two starter fixtures prove the point nicely. One checks that the run pauses. One checks that approval plus resume plus finalize actually happen. That is a concrete, testable runtime contract.

What it still does not solve

This part matters more than the happy section.

1. The trace model is still flat and local

The repo stores event lists, not a richer span hierarchy. There is no cross-process correlation, no distributed context propagation, and no external telemetry backend.

2. The trace summary does not yet include timing or cost

build_trace_summary(state) currently reports counts and high-level state, but not per-step durations, model latency, or estimated token/cost data.

That is a real missing piece, and it will matter later in this series when we talk about cost, latency, and throughput engineering.
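Per-step timing could plausibly come from data the trace already carries. The sketch below assumes each tool event has a tool key and an ISO-8601 ts string without a trailing "Z", and that calls to the same tool do not overlap. It sums wall-clock seconds between each tool_start and its next tool_ok or tool_error; it is an illustration, not part of the current repo.

```python
from datetime import datetime
from typing import Any


def tool_durations(trace: list[dict[str, Any]]) -> dict[str, float]:
    """Total seconds per tool between tool_start and the next tool_ok/tool_error."""
    open_starts: dict[str, datetime] = {}
    totals: dict[str, float] = {}
    for e in trace:
        tool = e.get("tool")
        if tool is None:
            continue  # run-level events such as approval_required carry no tool
        ts = datetime.fromisoformat(e["ts"])
        if e["event"] == "tool_start":
            open_starts[tool] = ts
        elif e["event"] in ("tool_ok", "tool_error") and tool in open_starts:
            elapsed = (ts - open_starts.pop(tool)).total_seconds()
            totals[tool] = totals.get(tool, 0.0) + elapsed
    return totals
```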

3. The eval fixtures are still small and deterministic

The current fixtures are good sanity checks. They are not a replay framework, not a regression corpus across many scenarios, and not a statistical eval suite.

4. Output quality is still only lightly covered

These evals check runtime behavior. They do not deeply evaluate whether the generated report is semantically strong, complete, or aligned with a domain rubric.

5. The repo still depends on local file persistence

Everything remains under .runs/. There is no external observability store, no dashboard, and no team-facing run browser.

Again: that is fine. It is a demo repo, not a platform. But it should not pretend otherwise.

What I think most agent teams should do next

If you are building an agent harness today, I think the right order of operations is:

- emit structured events for the important control boundaries

- persist enough state to inspect and resume runs

- build a compact summary over the raw trace

- write evals that assert runtime behavior

- only then obsess over fancier dashboards

Too many teams jump from “we have logs” straight to “we need a tracing product” without first defining the event vocabulary that actually matters for their harness.

In this repo, that vocabulary is still tiny, which is part of why it is useful:

- run lifecycle

- tool lifecycle

- review result

- approval gate

- resume/completion

That is already enough to drive both observability and evals.
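Writing that vocabulary down as data keeps it honest. The mapping below is one reading of how the runner's event names fall into those five groups; the grouping is an assumption, not something the repo defines. Returning unknown events separately makes vocabulary drift visible the moment a new event name appears.

```python
from collections import Counter

# Assumed grouping of the runner's event names into the categories above.
EVENT_CATEGORIES = {
    "run_created": "run lifecycle",
    "tool_start": "tool lifecycle",
    "tool_ok": "tool lifecycle",
    "tool_error": "tool lifecycle",
    "draft_reviewed": "review result",
    "approval_required": "approval gate",
    "approval_still_required": "approval gate",
    "approval_granted": "approval gate",
    "run_resumed": "resume/completion",
    "run_completed": "resume/completion",
}


def categorize(events: list[str]) -> tuple[dict[str, int], list[str]]:
    """Count events per category and list any names outside the vocabulary."""
    counts = Counter(EVENT_CATEGORIES[e] for e in events if e in EVENT_CATEGORIES)
    unknown = [e for e in events if e not in EVENT_CATEGORIES]
    return dict(counts), unknown
```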

The same principle scales upward. Better tools help, but the first job is deciding what should be observable.

The practical takeaway

A lot of agent discussions still orbit around prompts, personalities, and output polish.

Those things matter. They are not what saves you when a run pauses forever, resumes incorrectly, skips a gate, or quietly fails its workflow contract.

Tracing and evals become useful when they tell you whether the system behaved correctly, not just whether the last paragraph sounded smart.

That is why I wanted this repo change in place before publishing Post 8. The live demo now has:

- persisted raw traces

- persisted trace summaries

- a trace-summary CLI surface

- lightweight trace-aware eval fixtures

- a real example of approval-path verification

That still does not make it a full observability stack. It does make the article honest.

And that is the bar I care about in this series.

References

- 67 AI Lab, harness-engineering repository: https://github.com/67ailab/harness-engineering

- OpenAI Agents SDK documentation: https://openai.github.io/openai-agents-python/

- OpenAI Agents SDK documentation, “Human-in-the-loop”: https://openai.github.io/openai-agents-js/guides/human-in-the-loop/

- OpenAI API documentation, “Guardrails and human review”: https://developers.openai.com/api/docs/guides/agents/guardrails-approvals

- LangGraph overview: https://docs.langchain.com/oss/python/langgraph/overview

- OpenTelemetry documentation, “Traces”: https://opentelemetry.io/docs/concepts/signals/traces/

- Temporal documentation: https://docs.temporal.io/