Qwen3.6-27B is one of the most interesting open models released this year. That is not because it is the biggest, but because it makes a strong case that mid-size dense models can now challenge much larger systems when the architecture, post-training, and inference strategy are designed well.

That matters. The industry has spent years obsessing over parameter count, but developers do not deploy parameter counts. They deploy systems that need to be accurate, fast, stable, affordable, and easy to serve. Qwen3.6-27B lands right in that sweet spot.

In this article, we will break down:

- the actual architecture details behind Qwen3.6-27B,

- how the hybrid attention design works,

- why it can be efficient despite a 262K native context window,

- and why a 27B dense model can still perform like a flagship model in coding and reasoning workloads.

The Short Version

Qwen3.6-27B works efficiently because it combines selective full attention with lighter linear-attention-style computation, keeps KV-cache costs under control, uses modern post-training for agentic coding, and adds a practical systems trick: thinking preservation, which reduces repeated reasoning across multi-turn workflows.

So the story is not just “better weights.” It is really better compute allocation:

- more expensive reasoning where global interaction matters,

- cheaper sequence processing where full quadratic attention is overkill,

- and less wasted inference on recomputing the same thought process again and again.

Official Model Overview

According to the official Qwen model card, Qwen3.6-27B has the following core specifications:

- Model type: Causal language model with vision encoder

- Parameters: 27B

- Hidden dimension: 5120

- Token embedding size: 248,320

- Layers: 64

- Context length: 262,144 tokens natively, extensible to 1,010,000 tokens

- MTP: Trained with multi-token prediction

The most important architectural detail is the model’s hidden layout:

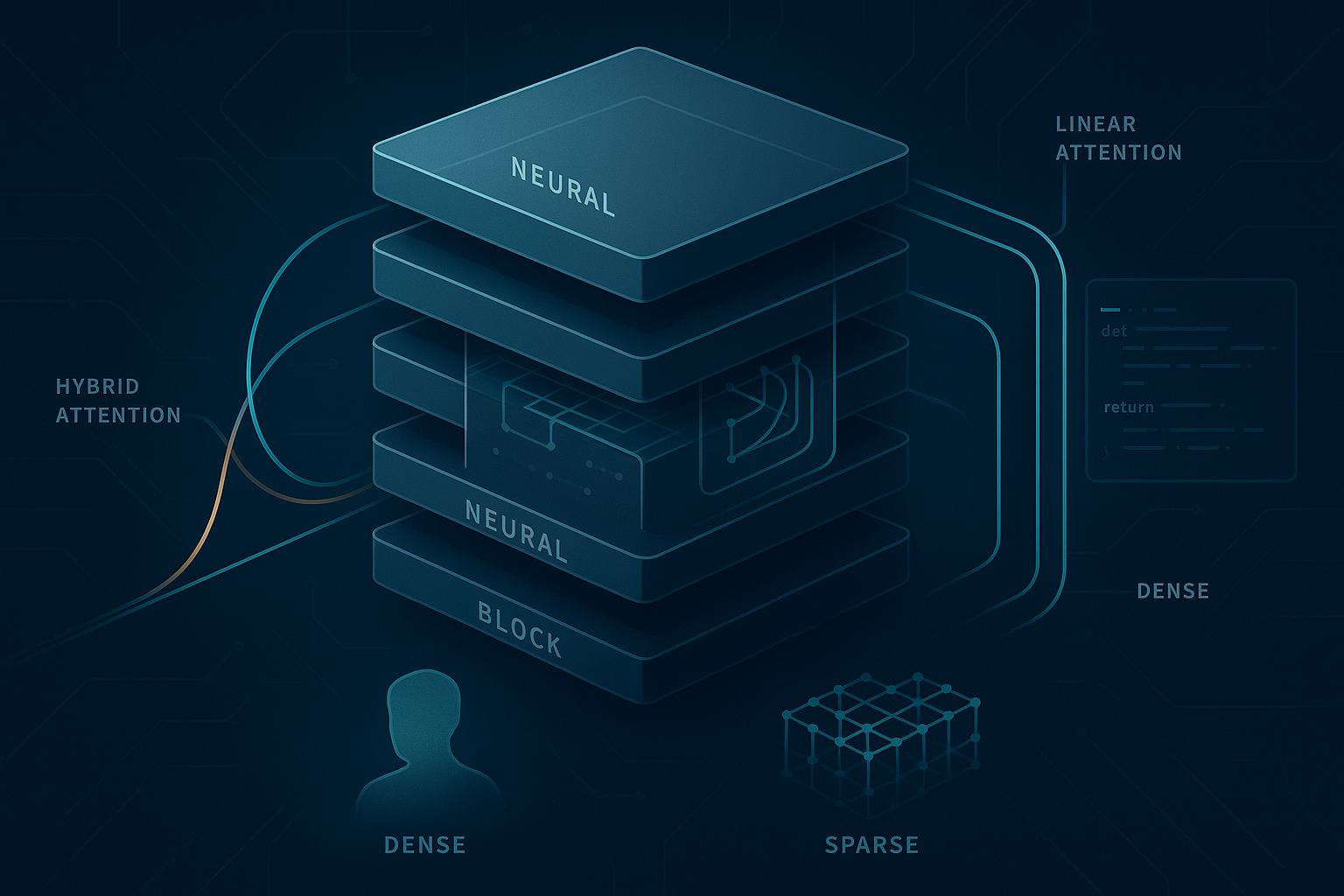

16 × (3 × (Gated DeltaNet → FFN) → 1 × (Gated Attention → FFN))

That means the 64-layer stack is organized into 16 repeated macro-blocks, and each macro-block contains:

- 3 layers of Gated DeltaNet + FFN

- followed by 1 layer of Gated Attention + FFN

This is a hybrid design. Instead of paying the cost of full attention at every layer, Qwen3.6-27B uses a cheaper sequence-mixing mechanism most of the time, then periodically inserts a stronger global attention layer.
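To make the layout concrete, here is a minimal sketch that expands the pattern into a per-layer schedule. The labels `gated_deltanet` and `gated_attention` are illustrative names chosen for this article, not identifiers from Qwen's code.

```python
# Expand 16 x (3 x DeltaNet + 1 x Attention) into a flat list of layer types.
# The string labels are illustrative, not names taken from Qwen's implementation.
NUM_MACRO_BLOCKS = 16
DELTANET_PER_BLOCK = 3
ATTENTION_PER_BLOCK = 1


def build_layer_schedule():
    """Return the sequence-mixer type for each layer, in order."""
    block = (["gated_deltanet"] * DELTANET_PER_BLOCK
             + ["gated_attention"] * ATTENTION_PER_BLOCK)
    return block * NUM_MACRO_BLOCKS


schedule = build_layer_schedule()
attention_layers = [i for i, kind in enumerate(schedule) if kind == "gated_attention"]

print(len(schedule))          # total number of layers
print(schedule[:4])           # one macro-block
print(attention_layers[:4])   # 0-based indices of the first attention layers
```

Only a quarter of the stack pays for full attention, which is the property the rest of this article keeps coming back to.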

Architecture Details That Actually Matter

1. Gated DeltaNet does the cheap, high-throughput sequence processing

The official model card reports the following for Gated DeltaNet:

- 48 linear attention heads for V

- 16 heads for QK

- Head dimension: 128

You can think of Gated DeltaNet as the “efficient backbone” of the model. Instead of using full quadratic self-attention on every token pair in every layer, it uses a more efficient update mechanism for modeling token interactions across long sequences.

Why is that useful?

Because standard attention scales poorly with context length. If you want 32K, 128K, or 262K context, quadratic attention becomes extremely expensive in both latency and memory bandwidth. A linear-attention-style component lets the model process long streams much more economically.
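A rough way to see the gap is to count work items. The sketch below compares the number of query-key pairs a causal full-attention layer scores against the number of state updates a recurrent linear-attention layer performs. The two units are not equally expensive (a state update touches a whole fixed-size matrix), so treat this as an illustration of growth rates, not a latency model.

```python
def causal_attention_pairs(n_tokens: int) -> int:
    """Query-key pairs scored by causal full attention, per layer and per head."""
    return n_tokens * (n_tokens + 1) // 2


def linear_state_updates(n_tokens: int) -> int:
    """A recurrent linear-attention layer does one fixed-size state update per token."""
    return n_tokens


for n in (32_768, 131_072, 262_144):
    pairs = causal_attention_pairs(n)
    ratio = pairs / linear_state_updates(n)
    print(f"{n:>7} tokens: {pairs:.3e} pairs, {ratio:,.0f} pairs per state update")
```

Doubling the context roughly quadruples the pair count but only doubles the number of state updates.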

The gated part is important too. Gating gives the model a way to control how much information is retained, updated, or suppressed at each step. That helps the model behave less like a blunt compression machine and more like a selective memory system.

In plain English: Gated DeltaNet gives Qwen3.6-27B a cheaper way to keep track of long sequences without fully paying transformer attention costs everywhere.
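For intuition, here is a toy single-head version of the gated delta rule as it is usually written in the Gated DeltaNet literature: a fixed-size state matrix is decayed by a gate `alpha`, the value currently stored under the incoming key is erased with strength `beta`, and the new value is written in. This is a sketch in plain Python under those assumptions. It leaves out normalization, the chunked parallel form, and whatever gate parameterization Qwen actually uses, none of which the model card spells out.

```python
def gated_delta_step(S, k, v, alpha, beta):
    """One recurrent update of a d_v x d_k state matrix S.

    S_new = alpha * (S - beta * (S k) k^T) + beta * v k^T
    alpha in [0, 1] is the decay gate, beta in [0, 1] is the write strength.
    """
    # Value currently associated with key k in the state.
    Sk = [sum(S[i][j] * k[j] for j in range(len(k))) for i in range(len(S))]
    return [
        [alpha * (S[i][j] - beta * Sk[i] * k[j]) + beta * v[i] * k[j]
         for j in range(len(k))]
        for i in range(len(S))
    ]


def read(S, q):
    """Query the state: o = S q."""
    return [sum(S[i][j] * q[j] for j in range(len(q))) for i in range(len(S))]


S = [[0.0, 0.0], [0.0, 0.0]]
key = [1.0, 0.0]

S = gated_delta_step(S, key, [3.0, 4.0], alpha=1.0, beta=1.0)
first = read(S, key)      # the value just written

S = gated_delta_step(S, key, [5.0, 6.0], alpha=1.0, beta=1.0)
second = read(S, key)     # old value is replaced, not summed with the new one

S = gated_delta_step(S, [0.0, 1.0], [1.0, 1.0], alpha=0.5, beta=1.0)
decayed = read(S, key)    # earlier memory shrinks by the decay gate

print(first, second, decayed)
```

The state never grows with sequence length, which is where the efficiency comes from. The gate decides how quickly old content fades, and the delta term lets a new write overwrite a stale association instead of piling on top of it.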

2. Gated Attention is inserted periodically for global mixing

The official model card reports the following for Gated Attention:

- 24 attention heads for Q

- 4 heads for KV

- Head dimension: 256

- Rotary position embedding dimension: 64

This is the heavyweight reasoning component. The fourth and final layer of each macro-block uses Gated Attention instead of Gated DeltaNet, so 16 of the 64 layers are attention layers.

That is a smart compromise.

Linear attention variants are efficient, but they are not always as expressive as full attention when you need detailed token-to-token interaction, especially in coding, structured reasoning, and multi-hop context integration. By inserting a stronger attention layer at regular intervals, Qwen gets periodic opportunities to globally reconcile the sequence.

So the model alternates between:

- cheap propagation and memory updates, and

- richer global synchronization.

That is exactly the kind of design you want if your goal is strong coding performance without turning inference into a furnace.

3. Low KV head count helps memory efficiency

One subtle but very important clue is the 4 KV heads in the Gated Attention layers.

With 24 query heads sharing 4 key/value heads, this is a grouped-query-attention-style layout: six query heads read from each KV head, so the model keeps plenty of query capacity while storing and moving far fewer keys and values. This matters because in long-context inference the KV cache, not just the parameter count, often becomes the real bottleneck.

Why fewer KV heads help:

- less memory per token in the KV cache,

- lower memory bandwidth pressure during decoding,

- better long-context serving economics,

- and often higher throughput on real hardware.

For deployment, this matters more than people admit. A model can have excellent benchmark numbers and still be annoying to serve if the KV cache is too expensive. Qwen3.6-27B seems designed with that practical constraint in mind.
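A back-of-the-envelope calculation shows how much these choices matter. The sketch below uses the head counts and head dimension from the model card and assumes a 16-bit cache. The two comparison configurations are hypothetical baselines invented for contrast, and the estimate ignores the fixed-size recurrent state of the DeltaNet layers as well as any cache quantization or paging in the serving stack.

```python
ATTENTION_LAYERS = 16     # one Gated Attention layer per macro-block
TOTAL_LAYERS = 64
Q_HEADS = 24
KV_HEADS = 4
HEAD_DIM = 256
BYTES_PER_VALUE = 2       # assumption: 16-bit cache entries (e.g. bf16)
GIB = 1024 ** 3


def kv_cache_bytes(tokens, layers, kv_heads,
                   head_dim=HEAD_DIM, bytes_per_value=BYTES_PER_VALUE):
    """KV cache size for one sequence; the factor 2 covers keys and values."""
    return 2 * layers * kv_heads * head_dim * bytes_per_value * tokens


context = 262_144
hybrid = kv_cache_bytes(context, ATTENTION_LAYERS, KV_HEADS)
# Hypothetical baseline: attention in all 64 layers, still 4 KV heads.
all_layers_gqa = kv_cache_bytes(context, TOTAL_LAYERS, KV_HEADS)
# Hypothetical baseline: attention in all 64 layers, one KV head per query head.
all_layers_mha = kv_cache_bytes(context, TOTAL_LAYERS, Q_HEADS)

print("query heads per KV head:", Q_HEADS // KV_HEADS)
for name, size in [("hybrid, 4 KV heads", hybrid),
                   ("all layers, 4 KV heads", all_layers_gqa),
                   ("all layers, 24 KV heads", all_layers_mha)]:
    print(f"{name:<24} {size / GIB:6.0f} GiB at {context} tokens")
```

Under these assumptions the hybrid layout needs a quarter of the cache of an all-attention stack with the same KV heads, and the small KV-head count removes another factor of six compared with one KV head per query head.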

4. FFN width is still substantial

The feed-forward network intermediate dimension is 17,408, which is large enough to preserve dense-model expressivity even though the architecture is aggressively optimized for efficiency.

This is important because efficiency tricks only work if you do not starve the model’s representational capacity. Qwen3.6-27B is not small in the toy sense. It is still a serious dense model with enough width and depth to encode a lot of behavior.
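To get a feel for how much of the model sits in the feed-forward layers, here is a quick parameter count. It assumes a gated FFN with three weight matrices (gate, up, and down projections) and no biases, which is a common design but is not stated in the model card, so the result is an estimate rather than an official breakdown.

```python
HIDDEN = 5120
FFN_INTERMEDIATE = 17_408
LAYERS = 64
VOCAB = 248_320

# Assumption: gated FFN with three matrices (gate, up, down) and no biases.
ffn_params_per_layer = 3 * HIDDEN * FFN_INTERMEDIATE
ffn_params_total = ffn_params_per_layer * LAYERS
embedding_params = VOCAB * HIDDEN   # a single token-embedding table

print(f"FFN per layer: {ffn_params_per_layer / 1e6:.1f}M")
print(f"FFN total:     {ffn_params_total / 1e9:.2f}B")
print(f"Embedding:     {embedding_params / 1e9:.2f}B")
```

If that assumption holds, the FFN stack alone accounts for roughly 17B of the 27B parameters, which is why the efficiency work on the sequence-mixing side does not hollow out the model's capacity.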

Why a 27B Model Can Feel Much Stronger Than “27B”

A lot of people still intuitively map model quality to parameter count. That shortcut is getting worse every quarter.

Qwen3.6-27B is strong because modern model quality is increasingly driven by the whole stack:

- architecture,

- data quality,

- post-training,

- evaluation targeting,

- inference-time behavior,

- and systems efficiency.

Qwen3.6-27B benefits from all six.

Hybrid architecture beats brute-force repetition

Using Gated DeltaNet for three layers out of every four dramatically lowers the cost of long-context sequence modeling. But by retaining periodic Gated Attention layers, the model does not give up the higher-fidelity token interaction needed for coding and reasoning.

This gives Qwen3.6-27B a better tradeoff curve than a naïve dense transformer that uses the same expensive mechanism everywhere.

Dense models still have important practical advantages

Compared with very large sparse MoE systems, a well-trained dense model can still be attractive because it is often simpler to fine-tune, simpler to quantize, simpler to serve, and more predictable in edge cases.

A 27B dense model is big enough to be powerful, but small enough that deployment is still plausible for serious teams without frontier-scale infrastructure.

Post-training is clearly optimized for real coding work

The Qwen release specifically emphasizes agentic coding, repository-level reasoning, and stronger multi-step workflows. That matters because benchmark wins do not come from architecture alone.

The official benchmark table is especially notable because Qwen3.6-27B posts very strong results in coding-oriented evaluations, including:

- SWE-bench Verified: 77.2

- SWE-bench Pro: 53.5

- SWE-bench Multilingual: 71.3

- Terminal-Bench 2.0: 59.3

- SkillsBench Avg5: 48.2

- NL2Repo: 36.2

- Claw-Eval Avg: 72.4

- Claw-Eval Pass^3: 60.6

That is not the profile of a merely efficient model. That is the profile of a model trained to actually survive tool-using, multi-step engineering tasks.

The Secret Weapon: Thinking Preservation

One of the most practical ideas in Qwen3.6 is not architectural at all. It is thinking preservation.

By default, many reasoning models generate a fresh chain of thought for the latest user turn and drop the reasoning traces from earlier turns when the context is rebuilt. Qwen3.6 introduces an option to retain and reuse those historical thinking blocks.

Why this matters:

- in iterative development, the model does not have to reconstruct the same reasoning from scratch each turn;

- in agent loops, it can preserve planning continuity across steps;

- it can reduce redundant reasoning tokens;

- and according to Qwen, it can improve KV cache utilization and reduce overhead.

This is a very underappreciated systems idea.

A lot of real-world LLM inefficiency has nothing to do with the raw forward pass. It comes from repeatedly rebuilding context and re-deriving intermediate reasoning in long workflows. If a model can preserve useful reasoning state in a controllable way, multi-turn coding becomes both faster and more coherent.

In other words, Qwen3.6-27B is not only efficient inside each layer; it is also trying to be efficient across the conversation trajectory itself.
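The difference between the two behaviors can be sketched at the message-history level. The helper below is hypothetical: `build_history`, its `preserve_thinking` flag, and the `<think>...</think>` tag format are assumptions made for illustration, not Qwen's actual chat template or API, where the real switch lives.

```python
import re

# Assumed reasoning-block format; the real template may differ.
THINK_BLOCK = re.compile(r"<think>.*?</think>\s*", re.DOTALL)


def build_history(messages, preserve_thinking):
    """Return the message list to send for the next turn.

    messages: list of {"role": ..., "content": ...} dicts.
    preserve_thinking=False strips reasoning blocks from earlier assistant turns.
    preserve_thinking=True keeps them so later turns can build on them.
    """
    if preserve_thinking:
        return [dict(m) for m in messages]
    history = []
    for m in messages:
        content = m["content"]
        if m["role"] == "assistant":
            content = THINK_BLOCK.sub("", content)
        history.append({"role": m["role"], "content": content})
    return history


turns = [
    {"role": "user", "content": "Why does test_login fail?"},
    {"role": "assistant",
     "content": "<think>Token expiry is compared in UTC.</think>"
                "The fixture uses local time."},
    {"role": "user", "content": "Patch it."},
]

stripped = build_history(turns, preserve_thinking=False)
kept = build_history(turns, preserve_thinking=True)
print(stripped[1]["content"])
print(kept[1]["content"])
```

With the reasoning kept, the earlier turns stay byte-for-byte identical from one request to the next, which is also what makes prefix caching of the KV cache easier to exploit. With it stripped, the model has to rediscover the UTC detail before it can write the patch.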

Long Context Without Completely Imploding the Cost Curve

Qwen3.6-27B supports:

- 262,144 tokens native context, and

- up to 1,010,000 tokens extended context.

That is a huge context window for a 27B dense model.

Normally, the catch with giant context windows is that they are technically available but practically painful. Latency spikes, KV cache grows fast, and long prompts become expensive enough that users quietly avoid them.

Qwen’s hybrid design appears built specifically to avoid that trap:

- DeltaNet-style layers reduce the need for full attention everywhere,

- reduced KV-head design cuts serving costs,

- and thinking preservation can lower repeated context reconstruction in ongoing sessions.

That does not make long context free. Nothing does. But it does make long context more believable as a production feature rather than just a spec-sheet flex.

Multi-Token Prediction Helps Decoding Efficiency

The model card also notes that Qwen3.6-27B is trained with MTP (multi-token prediction).

The exact benefit depends on the serving stack, but the high-level idea is straightforward: instead of being optimized only to predict the single next token, the model is also trained to look more than one token ahead, which prepares it for speculative or multi-token decoding strategies.

That can matter in two ways:

- higher effective generation throughput under compatible inference engines,

- better alignment with real serving optimizations such as speculative decoding.

This is another example of Qwen optimizing not just for abstract model quality, but for end-to-end usefulness.
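The acceptance step at the heart of draft-and-verify decoding fits in a few lines. This is a generic greedy variant written for illustration, not Qwen's or any particular engine's implementation, and `accept_draft` is a hypothetical helper name. It assumes the drafted tokens come from a cheap predictor (such as an MTP head) and that the main model has scored all of them in one verification pass.

```python
def accept_draft(draft_tokens, target_tokens):
    """Greedy draft-and-verify acceptance.

    draft_tokens: k tokens proposed cheaply.
    target_tokens: k + 1 tokens the main model picks at those positions,
        computed in a single verification pass over the draft.
    Returns the tokens to emit: the matching prefix plus one main-model token.
    """
    accepted = []
    for drafted, verified in zip(draft_tokens, target_tokens):
        if drafted != verified:
            break
        accepted.append(drafted)
    # Main model's token at the first mismatch, or the bonus token after a full match.
    accepted.append(target_tokens[len(accepted)])
    return accepted


print(accept_draft([5, 9, 2], [5, 9, 7, 1]))   # draft diverges at the third token
print(accept_draft([5, 9, 2], [5, 9, 2, 4]))   # whole draft accepted
print(accept_draft([1, 2], [3, 4, 5]))         # draft wrong immediately
```

Every verification pass emits at least one token, so output matches what greedy decoding with the main model alone would produce. The speedup depends entirely on how often the draft is right.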

What the Benchmark Pattern Suggests

The benchmark pattern is actually more interesting than any single number.

Qwen3.6-27B is not just competitive in generic knowledge tests. Its standout profile is in coding, agentic execution, and tool-integrated workflows. That strongly suggests the model has been tuned for:

- code editing and synthesis,

- repository navigation,

- structured multi-step action,

- and maintaining coherence across longer tasks.

That is also consistent with the release messaging around front-end workflows, repository-level reasoning, and agentic utility.

If you care about practical engineering use, that is exactly where a 27B model should try to win.

So How Does Qwen3.6-27B Stay Efficient and Effective?

Here is the cleanest answer.

It is efficient because:

- it uses hybrid sequence modeling rather than full quadratic attention at every layer;

- it inserts global attention selectively instead of everywhere;

- it keeps KV-cache costs lower with a smaller KV-head design;

- it is trained with multi-token prediction (MTP), which compatible serving stacks can use for faster decoding;

- and it introduces thinking preservation to reduce repeated reasoning overhead in iterative tasks.

It is effective because:

- 27B parameters is still a lot of capacity when used well;

- the model retains periodic high-fidelity attention layers for harder reasoning and coding interactions;

- its FFN width and layer count remain substantial;

- and its post-training appears highly targeted at agentic coding and real developer workflows rather than just textbook QA.

Put differently: Qwen3.6-27B is a model that spends compute where it matters most.

That is why it feels stronger than its size class suggests.

My Take

Qwen3.6-27B is a good example of where the open-model ecosystem is heading.

The old game was simple: bigger dense model, then bigger sparse model, then bigger context, then bigger benchmark chart. The new game is more interesting. It is about how intelligently you allocate computation across architecture, memory, context, and workflow state.

Qwen3.6-27B looks like a product of that new mindset.

It is not trying to win by being the largest thing on the page. It is trying to be a model that developers can actually use for serious coding and reasoning tasks without needing absurd infrastructure. I think that is the right direction.

The most important lesson is broader than Qwen itself: mid-size models are no longer “compromise models.” If the architecture is smart enough and the post-training is targeted enough, mid-size dense systems can be some of the most practical high-performance models on the market.

References

- Qwen Team. Qwen/Qwen3.6-27B model card on Hugging Face. https://huggingface.co/Qwen/Qwen3.6-27B

- QwenLM GitHub repository. https://github.com/QwenLM/Qwen3.6

- Qwen Team release blog for Qwen3.6-27B. https://qwen.ai/blog?id=qwen3.6-27b