Qwen3.6-27B Deep Dive: Why This Mid-Size Dense Model Works So Well

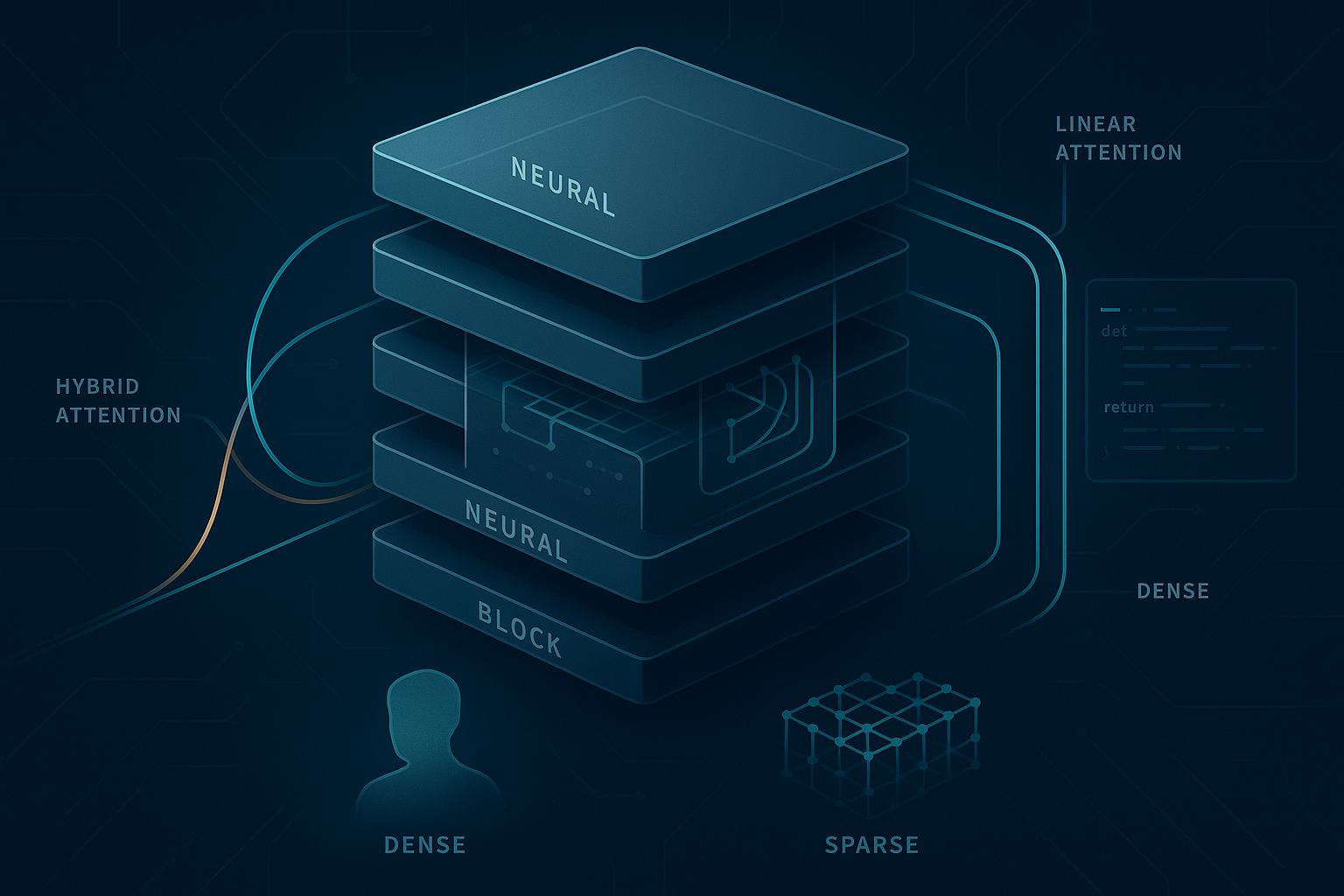

Qwen3.6-27B is one of the most interesting open models released this year, not because it is the biggest, but because it makes a strong case that mid-size dense models can now challenge much larger systems when the architecture, post-training, and inference strategy are designed well. That matters: the industry has spent years obsessing over parameter count, but developers do not deploy parameter counts. They deploy systems that need to be accurate, fast, stable, affordable, and easy to serve. Qwen3.6-27B lands right in that sweet spot. ...