The LLM Efficiency Revolution: How 8B Models Now Outperform 70B Giants

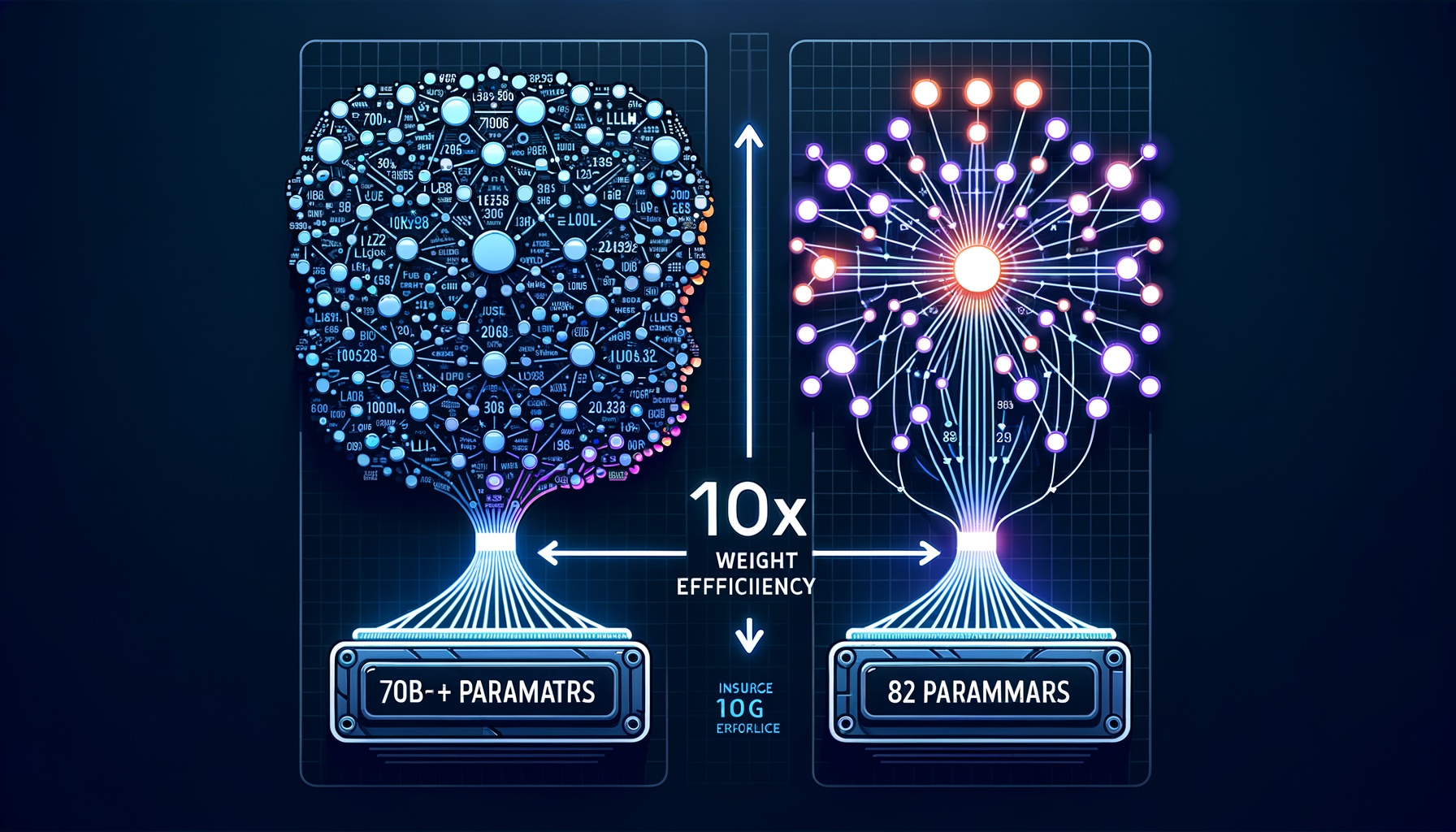

Large language model development is undergoing a major shift. A couple of years ago, the primary strategy for making an LLM smarter was simply to throw more parameters and raw compute at it. Today, models in the 7B to 8B parameter range often outperform the 70B+ models of that earlier generation. This leap in “weight efficiency” isn’t happening by accident or through mere trial and error. It is driven by deliberate, scientifically grounded methods across the entire training pipeline. ...