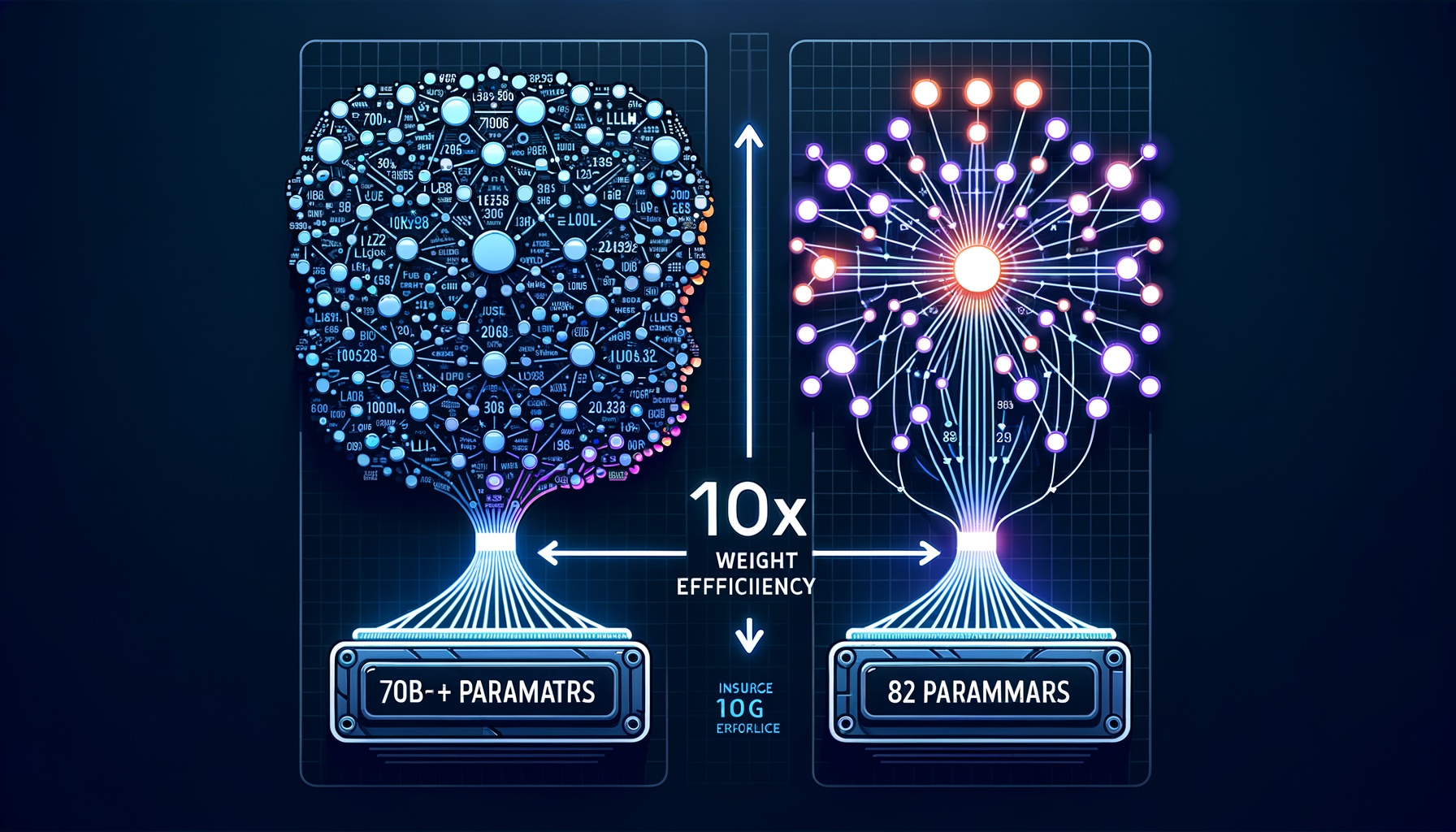

We are witnessing a massive paradigm shift in large language model development. A couple of years ago, the primary strategy to make an LLM smarter was simply to throw more parameters and raw compute at it. Today, models in the 7B to 8B parameter range often match or outperform earlier 70B-class models on many standard benchmarks.

This leap in “weight efficiency” is not the result of luck or blind trial and error. It comes from deliberate, empirically tested improvements across the entire training pipeline.

The Six Pillars of Modern LLM Efficiency

1. Radically Better Data Quality (Pre-training)

The phrase “garbage in, garbage out” has never been more relevant. Earlier models were trained on massive, lightly filtered web scrapes. Now, data engineering is arguably the most critical component of model intelligence.

Aggressive Filtering and Deduplication: Removing redundant or low-quality data ensures the model doesn’t waste its “memory capacity” (weights) memorizing boilerplate text.
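Near-duplicate removal is usually done with hashing rather than exact string matching. The sketch below uses MinHash over word shingles, one widely used technique for this step. The shingle size, hash count, similarity threshold, and function names are illustrative assumptions, not any specific lab's pipeline.

```python
import hashlib

# Illustrative parameters (assumptions): 3-word shingles, 64 hash functions, 0.8 similarity cutoff.

def shingles(text: str, n: int = 3) -> set[str]:
    """Split text into overlapping word n-grams ("shingles")."""
    words = text.lower().split()
    return {" ".join(words[i:i + n]) for i in range(max(1, len(words) - n + 1))}

def minhash_signature(text: str, num_hashes: int = 64) -> list[int]:
    """For each seeded hash function, keep the smallest hash value over all shingles."""
    grams = shingles(text)
    return [
        min(int.from_bytes(hashlib.blake2b(f"{seed}:{g}".encode(), digest_size=8).digest(), "big")
            for g in grams)
        for seed in range(num_hashes)
    ]

def estimated_jaccard(a: list[int], b: list[int]) -> float:
    """The fraction of matching slots estimates the Jaccard similarity of the shingle sets."""
    return sum(x == y for x, y in zip(a, b)) / len(a)

def deduplicate(docs: list[str], threshold: float = 0.8) -> list[str]:
    """Keep a document only if it is not a near-duplicate of one already kept.

    Pairwise comparison is O(n^2); production systems add locality-sensitive hashing (LSH).
    """
    kept, signatures = [], []
    for doc in docs:
        sig = minhash_signature(doc)
        if all(estimated_jaccard(sig, s) < threshold for s in signatures):
            kept.append(doc)
            signatures.append(sig)
    return kept
```

For example, with `docs = ["the cat sat on the mat", "The cat sat on the mat", "a completely different sentence"]`, `deduplicate(docs)` keeps the first and third documents, because lowercasing makes the first two identical.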

Heuristic & Model-Based Curation: Cheap rule-based filters (document length, symbol-to-word ratios, repeated lines) remove obvious junk first. AI companies then use smaller, specially trained models as classifiers to score the remaining data for educational value, factual density, and reasoning quality before it ever reaches the main model.
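A minimal sketch of chaining cheap heuristics with a model-based score. The thresholds are illustrative assumptions, and `quality_score` is a hypothetical stand-in for a trained classifier (in practice, a small model trained to predict something like educational value).

```python
from typing import Callable

def passes_heuristics(doc: str) -> bool:
    """Cheap rule-based checks; all thresholds here are illustrative assumptions."""
    words = doc.split()
    if not 50 <= len(words) <= 100_000:        # too short to be useful, or absurdly long
        return False
    mean_len = sum(len(w) for w in words) / len(words)
    if not 3 <= mean_len <= 10:                # gibberish or run-together token soup
        return False
    alpha_ratio = sum(any(c.isalpha() for c in w) for w in words) / len(words)
    return alpha_ratio >= 0.8                  # mostly real words, not symbols or numbers

def curate(docs: list[str],
           quality_score: Callable[[str], float],  # hypothetical trained classifier
           threshold: float = 0.5) -> list[str]:
    """Run the heuristics first, so the expensive classifier only scores survivors."""
    return [d for d in docs if passes_heuristics(d) and quality_score(d) >= threshold]
```

Ordering matters for cost: the `and` short-circuits, so documents rejected by the rules never reach the classifier.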

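Curriculum Learning: Instead of sampling data uniformly at random, training follows a structured progression, starting with simpler material and shifting toward harder content such as complex reasoning, much like how a human learns in school. In practice, this often means changing the data mixture across training stages, for example upweighting high-quality math, code, and reasoning data near the end of pre-training.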
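One simple way to express a curriculum is a staged schedule over a difficulty score. In this sketch, `difficulty` is a hypothetical per-example score (it could come from length, a classifier, or a smaller model's loss), and the stage count and batch size are arbitrary; real pipelines more often reweight data mixtures between stages than sort individual examples.

```python
import random
from typing import Callable, Iterator, Sequence, TypeVar

T = TypeVar("T")

def curriculum_batches(
    examples: Sequence[T],
    difficulty: Callable[[T], float],  # hypothetical difficulty score
    num_stages: int = 3,
    batch_size: int = 4,
    seed: int = 0,
) -> Iterator[list[T]]:
    """Yield batches stage by stage, from the easiest slice of the data to the hardest.

    Examples are shuffled within each stage so batches still mix different content.
    """
    if not examples:
        return
    rng = random.Random(seed)
    ordered = sorted(examples, key=difficulty)
    stage_size = -(-len(ordered) // num_stages)  # ceiling division
    for start in range(0, len(ordered), stage_size):
        stage = ordered[start:start + stage_size]
        rng.shuffle(stage)
        for i in range(0, len(stage), batch_size):
            yield stage[i:i + batch_size]
```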

2. Overtraining (Training Past the Compute-Optimal Point)

In 2022, the “Chinchilla Scaling Laws” suggested a compute-optimal ratio of training data to model size (roughly 20 tokens per parameter). However, Chinchilla only minimizes training compute. If inference cost is your primary constraint, it pays to train a small model on vastly more data than Chinchilla suggests, because a smaller model is cheaper every time it serves a request.

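By training a smaller model (e.g., 8 billion parameters) on a massive corpus (e.g., 15 trillion tokens, nearly 100 times the roughly 160 billion tokens Chinchilla would prescribe), the model keeps improving well past the “optimal” point. Intuitively, it is forced to compress more knowledge into the same weights. Returns diminish, but the extra training is a one-time cost, and the result is a model that is far more capable for its size and cheap to serve.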
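The arithmetic behind this example is easy to check. The sketch uses the roughly 20-tokens-per-parameter rule of thumb and the standard approximation that training costs about 6 FLOPs per parameter per token (C ≈ 6ND); both are approximations, and the function names are illustrative.

```python
def chinchilla_tokens(params: float, tokens_per_param: float = 20.0) -> float:
    """Compute-optimal token budget under the ~20 tokens/parameter rule of thumb."""
    return params * tokens_per_param

def training_flops(params: float, tokens: float) -> float:
    """Standard approximation: ~6 FLOPs per parameter per training token."""
    return 6 * params * tokens

N = 8e9    # 8B parameters
D = 15e12  # 15T training tokens

optimal = chinchilla_tokens(N)
print(f"Chinchilla-optimal tokens: {optimal / 1e9:.0f}B")    # 160B
print(f"Actual tokens per parameter: {D / N:.0f}")            # 1875
print(f"Overtraining factor: {D / optimal:.1f}x")             # 93.8x
print(f"Training compute: {training_flops(N, D):.1e} FLOPs")  # 7.2e+23
```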

3. Advanced Alignment and Post-Training

Pre-training only teaches a model to predict the next token. Post-training is where the model learns how to actually behave, reason, and answer questions.

Supervised Fine-Tuning (SFT): Training on curated examples of prompts paired with high-quality responses (written by humans or, increasingly, generated by stronger models) to teach the model how to structure an answer.
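A detail that is easy to miss: many SFT recipes compute the loss only on the response tokens, not on the prompt. A minimal sketch, assuming the model's log-probability for each target token and a response mask are already available (both are hypothetical inputs here):

```python
def sft_loss(token_logprobs: list[float], is_response: list[bool]) -> float:
    """Mean negative log-likelihood over response tokens only.

    Prompt tokens are masked out, so the model is trained to produce the answer,
    not to reproduce the user's question.
    """
    selected = [-lp for lp, keep in zip(token_logprobs, is_response) if keep]
    if not selected:
        raise ValueError("no response tokens to train on")
    return sum(selected) / len(selected)
```

For instance, `sft_loss([-5.0, -4.0, -0.5, -1.5], [False, False, True, True])` ignores the two poorly predicted prompt tokens and returns 1.0.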

Direct Preference Optimization (DPO) / RLHF: Instead of just mimicking text, the model learns from comparisons between answers. In RLHF, human graders (or an AI judge) rank multiple responses, a reward model is trained on those rankings, and the language model is then optimized against that reward with reinforcement learning (typically PPO). DPO skips the separate reward model and the RL loop: it turns the same preference pairs into a simple classification-style loss and trains the model on it directly.
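The DPO objective fits in a few lines. This sketch computes the per-pair loss, assuming the summed log-probabilities of each full response are already computed under the model being trained and under a frozen reference model (typically the SFT checkpoint). `beta=0.1` is a commonly used value, not a requirement; it controls how strongly the model may drift from the reference.

```python
import math

def dpo_loss(policy_chosen: float, policy_rejected: float,
             ref_chosen: float, ref_rejected: float, beta: float = 0.1) -> float:
    """DPO loss for a single preference pair.

    Each argument is the summed log-probability of a full response: the preferred
    ("chosen") or dispreferred ("rejected") answer, under either the model being
    trained (policy) or the frozen reference model.
    """
    chosen_shift = policy_chosen - ref_chosen        # how far training moved the chosen answer
    rejected_shift = policy_rejected - ref_rejected  # ... and the rejected one
    x = beta * (chosen_shift - rejected_shift)
    # -log(sigmoid(x)), computed in a numerically stable way
    if x >= 0:
        return math.log1p(math.exp(-x))
    return -x + math.log1p(math.exp(x))
```

When the policy and reference agree, the loss is log 2; it falls below that once the policy raises the chosen answer's likelihood more than the rejected answer's.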

4. Synthetic Data and Self-Play

High-quality human-written data is a finite resource, and labs are approaching its limits. To bridge the gap, researchers use “frontier models” (the largest, most capable models) to generate high-quality training data for smaller models.

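Constitutional AI / RLAIF: Models are given a set of written principles (a constitution) and use them to critique and revise their own outputs. The revised answers become fine-tuning data, and AI-generated preference labels (Reinforcement Learning from AI Feedback) replace some or all of the human labels in the preference-training step.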
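The critique-and-revise loop can be sketched as follows. `model` is a hypothetical text-in, text-out callable (any LLM client would do), and the prompt wording is invented for illustration; the published Constitutional AI recipe differs in details such as how principles are selected and how prompts are phrased.

```python
from typing import Callable

def constitutional_revision(prompt: str,
                            model: Callable[[str], str],  # hypothetical LLM call
                            principles: list[str]) -> dict[str, str]:
    """One critique-and-revise pass per principle.

    Returns the original and final responses; pairs like this can serve as SFT
    targets, or as AI-labeled preference data for the RLAIF step.
    """
    original = response = model(prompt)
    for principle in principles:
        critique = model(
            f"Critique the response according to this principle: {principle}\n\n"
            f"Prompt: {prompt}\nResponse: {response}"
        )
        response = model(
            f"Rewrite the response to address the critique.\n\n"
            f"Critique: {critique}\nResponse: {response}"
        )
    return {"prompt": prompt, "original": original, "revised": response}
```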

Reasoning Bootstrapping: A large model generates millions of step-by-step math or logic solutions. The smaller model is trained on these synthetic traces, a form of knowledge distillation that lets it absorb much of the larger model's reasoning behavior.
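A common refinement is to verify synthetic traces before training on them, keeping only solutions that reach a known correct answer (a form of rejection sampling). A sketch, assuming problems come with reference answers; `teacher` and `extract_answer` are hypothetical callables standing in for the large model and an answer parser.

```python
from typing import Callable, Iterable

def build_distillation_set(
    problems: Iterable[tuple[str, str]],   # (question, known correct answer)
    teacher: Callable[[str], str],         # hypothetical: samples a step-by-step solution
    extract_answer: Callable[[str], str],  # hypothetical: parses the final answer from a trace
    samples_per_problem: int = 4,
) -> list[dict[str, str]]:
    """Sample several teacher solutions per problem and keep only verified-correct traces."""
    dataset = []
    for question, gold in problems:
        for _ in range(samples_per_problem):
            trace = teacher(question)
            if extract_answer(trace) == gold:  # discard traces that reach a wrong answer
                dataset.append({"prompt": question, "completion": trace})
    return dataset
```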

5. Architectural Efficiencies

While the core Transformer architecture remains dominant, targeted tweaks have vastly improved how much “intelligence” fits into a given parameter budget.

Mixture of Experts (MoE): Instead of a dense network where every weight is used for every token, MoE layers contain many parallel feed-forward sub-networks (experts), and a small learned router picks a few of them for each token. The experts do not map neatly onto human topics like “physics” or “code”; the specialization that emerges is learned and often hard to interpret. The payoff is that the model can have a very large total parameter count (and knowledge capacity) while activating only a small fraction of it for any given token.
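A toy version of MoE routing, assuming a top-k softmax gate (a common design; Mixtral 8x7B, for example, activates 2 of its 8 experts per token in each MoE layer). Tokens are scalars and router logits are passed in directly to keep the sketch short; in a real layer the token is a hidden-state vector, a learned linear layer produces the logits, and each expert is a feed-forward network.

```python
import math
from typing import Callable, Sequence

def top_k_route(router_logits: Sequence[float], k: int = 2) -> dict[int, float]:
    """Select the k highest-scoring experts for one token and softmax over just those."""
    top = sorted(range(len(router_logits)), key=lambda i: router_logits[i], reverse=True)[:k]
    m = max(router_logits[i] for i in top)  # subtract the max for numerical stability
    exps = {i: math.exp(router_logits[i] - m) for i in top}
    total = sum(exps.values())
    return {i: e / total for i, e in exps.items()}

def moe_layer(token: float, router_logits: Sequence[float],
              experts: Sequence[Callable[[float], float]], k: int = 2) -> float:
    """Weighted sum of the selected experts' outputs; unselected experts never run."""
    gates = top_k_route(router_logits, k)
    return sum(weight * experts[i](token) for i, weight in gates.items())
```

With four experts and `k=2`, only half of the expert parameters do any work for a given token, which is the source of the compute savings.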

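Attention Tweaks (MQA/GQA): Multi-Query and Grouped-Query Attention let many query heads share a smaller set of key/value heads. This shrinks the key-value (KV) cache that must be stored and re-read for every generated token, which cuts memory use and bandwidth, speeds up generation, and makes very long contexts (whole books) far more practical to serve.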
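The saving is easy to quantify, because the KV cache grows linearly with the number of key/value heads: GQA lets several query heads share one key/value head, and MQA shares a single one across all of them. The config below is a hypothetical 8B-class model chosen for round numbers, not a published specification.

```python
def kv_cache_bytes(layers: int, kv_heads: int, head_dim: int,
                   seq_len: int, bytes_per_value: int = 2) -> int:
    """Bytes needed to cache keys AND values (hence the factor 2) for one sequence."""
    return 2 * layers * kv_heads * head_dim * seq_len * bytes_per_value

# Hypothetical 8B-class config: 32 layers, 32 query heads of size 128, 16-bit cache, 8K tokens.
config = dict(layers=32, head_dim=128, seq_len=8192)
for name, kv_heads in [("MHA, 32 KV heads", 32), ("GQA, 8 KV heads", 8), ("MQA, 1 KV head", 1)]:
    gib = kv_cache_bytes(kv_heads=kv_heads, **config) / 2**30
    print(f"{name}: {gib:.3f} GiB")  # 4.000, 1.000 and 0.125 GiB respectively
```

The cache is per sequence, so a server handling many long conversations at once feels this 4x (GQA) or 32x (MQA) reduction directly.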

6. Inference-Time Compute (System 2 Thinking)

The most recent leap in intelligence comes from changing how models generate answers after they are trained. Instead of blurting out the first word that comes to mind (System 1 thinking), models are now trained to use inference-time compute.

Techniques like Chain-of-Thought (CoT) prompting, search methods such as Monte Carlo Tree Search (MCTS), and hidden reasoning phases (as in OpenAI’s o1 models, whose exact method has not been published) let the model explore multiple potential solution paths, correct its own mistakes, and check its logic before showing you the final answer.
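The simplest way to see inference-time compute at work is self-consistency: sample several chains of thought and take a majority vote over their final answers. This is a different and much simpler technique than MCTS or o1-style hidden reasoning, shown only as a sketch; `sample` and `extract_answer` are hypothetical callables.

```python
from collections import Counter
from typing import Callable

def self_consistency(question: str,
                     sample: Callable[[str], str],          # hypothetical: one sampled CoT completion
                     extract_answer: Callable[[str], str],  # hypothetical: parses the final answer
                     n: int = 8) -> str:
    """Spend n model calls on one question and return the most common final answer."""
    answers = [extract_answer(sample(question)) for _ in range(n)]
    return Counter(answers).most_common(1)[0][0]
```

Raising `n` trades more compute per question for more reliable answers, which is the core idea behind inference-time scaling.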

The Bottom Line

The era of “bigger is automatically better” is over. We’ve entered the age of smarter, not just larger. The next breakthroughs won’t come from counting parameters alone; they’ll come from better data, better training strategies, better architectures, and better reasoning at inference time.

This is excellent news for everyone: more capable AI at lower costs, faster inference, and the potential to run powerful models on consumer hardware. The democratization of AI intelligence is no longer a distant dream—it’s happening now.